Doing philosophy …

Let me begin, once more, with a question for my colleagues in philosophy: How can we spend a lifetime on a chapter in Aristotle and think we’re done with a student essay in two hours? Both can be equally enigmatic.

I have raised this question several times and received some very interesting answers. What the question, as well as the numerous justifications, clearly reveals, in my opinion, is the state of our reading culture. The difference between the amounts of time spent on such texts is, of course, often justified with regard to the professionalization of reading. Nevertheless, we as scholars and teachers are role models in our respective disciplines. So let’s take a closer look! At least with regard to the type of text (a piece of Aristotle’s work and a student paper), there should be no significant differences: both are, in a broad sense, scholarly texts. The truly significant difference lies instead in a social factor, which, following Miranda Fricker, could be described as an epistemic injustice. It is not any particular characteristic of the text, but rather certain presuppositions held by the community of readers that lead to this injustice. These presuppositions are not simply your or my private opinions about Aristotle, but are structurally embedded or institutionalized in a long history, namely in the form of an existing canon that prioritizes so-called classics over students.

Now you might say: Well, that may well be so. However, such presuppositions are external to the act of reading itself: contextual and incidental, but not central to engaging with a text. The text, due to its inherent characteristics, must be decoded and thus stands, as it were, on its own. Objectively.

This almost classic objection is quite typical, not least in philosophy, but also in other disciplines, which is why I intend to focus primarily on refuting it. However, my aim here is not merely to engage in a petty feud. Rather, I consider the question of what reading is to be a fundamental question of philosophy. Surprisingly, with few exceptions, this question is almost never addressed in philosophy. Yet reading, especially the careful reading and reconstruction of written texts, is certainly part of the core business of philosophy. But if you ask colleagues how they read, you often hear (and this is no joke): “I just read.” It seems to me, however, a major oversight not to specifically consider the conditions of one’s own activity, that is, the reflexivity inherent in reading. In keeping with my long-term project with Irmtraud Hnilica, my thesis that reading is a social practice means precisely what the aforementioned objection denies: that social factors in reading are not merely incidental, but central to reading and the development of quite different reading cultures.

In the following, I would therefore like to first take a look at our reading culture, which promotes the aforementioned objection insofar as it considers texts to be something objectively given. Here, I am interested in the question of how and since when we have considered texts to be something objectively given. Secondly, this question will reveal that the assumed objectivity of texts is an illusion. Thirdly, I would like to outline what I consider reading to be. To help you prepare, I’ll tell you now that we might best understand reading by considering it in analogy to singing songs, namely as a cyclical and ritualized activity. It is the characteristics of this social activity that produce objectivity. Fourthly, I would like to suggest how the persistent illusion leads to a degenerative mechanization of reading. Finally, I would like to ask how this approach could help us in practice to understand our own and other reading cultures.

1 On the Foundation of Objectivism in Philosophy

Let’s begin again with the objection depicting texts themselves as objectively given. If we take this objection seriously, then there should be striking differences between the texts of a student and those of Aristotle, differences that justify the varying effort required. However, even before we can look into the texts themselves, the past, our very own past, will catch up with us. Whether we like it or not, we are standing in a tradition that treats certain texts as sacred. Aristotle, as an author, belongs to this tradition; for almost 1000 years he was considered philosophus, the philosopher par excellence. Even his fiercest opponents attempt to read his texts as the consistent pronouncements of a genius. The sacralization, or, to put it more cautiously, canonization, of Aristotle’s and other works has been followed, at least since the Enlightenment, by a distinctly different reading culture. Against the comprehensive commentary literature of antiquity and the Middle Ages, there is a recurring and increasingly emphatic push to replace close reading with the cultivation of so-called independent thought. For example, Schopenhauer* writes:

“When we read, someone else is thinking for us: we merely repeat his mental process. It is like when the student learns to write with the pen going over the pencil marks of the master. So when one reads, most of the thought-activity has been removed from him. Hence the palpable relief we perceive when we stop to take care of our own thoughts and move on to reading. While we read, our head is truly an arena of unknown thoughts. But if we take away these thoughts, what’s left? So it happens that those who read a lot and for most of the day, in the meantime relaxing with a carefree pastime, little by little lose the ability to think – like one who always rides a horse and eventually forgets how to walk. This is the case of many scholars: they have read to the point of becoming fools.” (Schopenhauer 1851, § 291)

Interestingly, Schopenhauer’s pessimism regarding reading is motivated by concerns similar to today’s warnings against social media, which simultaneously assert the decline of our reading and thinking abilities. If Schopenhauer were right, perhaps we should give up reading altogether, shouldn’t we? But it is precisely the assumption that a text contains the thoughts of others, which we merely follow through reading, that solidifies objectivism in relation to texts. Not surprisingly, certain texts were considered harmful. As early as the late 18th century, there was much criticism of “Lesesucht” (reading mania), particularly in Germany, with young people and women being considered “at-risk groups” in particular. At the same time, the historical-critical method was established in theological and historical disciplines. And in philosophy, alongside a methodologically grounded canonization of classics, notably by authorities like Kuno Fischer, the beginning of the 20th century saw a distinct renaissance of the efforts of the early modern Royal Society to establish an ideal language for the sciences, promising corresponding texts as objective reference systems for describing the world.

One characteristic we still share with the early 20th century is the idea that written texts can be rationally reconstructed by separating arguments from historical and rhetorical embellishments. This allows one to move directly from the surface of the text to its deep structure, to note the logical form, and to reformulate the core statements into premises and conclusions. This idea naturally suggests that the argument is embedded in the text and that one can search for it there—after some introductory instruction. Accordingly, much of current philosophy didactics is concerned not with reading itself, but with the analysis of arguments. Meanwhile, the wave of Critical Thinking, understood in this way, has also spread beyond philosophy to all those who want to teach any kind of competence.

Of course, one should learn how to analyze arguments, but one should also know precisely what one is doing. One is offering a specific translation through omission and substitution. On the one hand, it is claimed that the argument is contained within the text, but on the other hand, that the argument remains invisible without translation. Beginners are often led to believe that there should be one correct reconstruction.

Let’s take a closer look. To illustrate this, let’s consider the famous last sentence from Wittgenstein’s Tractatus Logico-Philosophicus: “Whereof one cannot speak, thereof one must be silent.”

– Firstly, you can interpret the sentence positivistically: as a restriction to what can be meaningfully said by the natural sciences. (In this case, you interpret the “must” as descriptive.)

– Secondly, you can interpret the sentence mystically and ethically: as a prioritization of the unspeakable as what is truly important. (In this case, you interpret the “must” as normative.)

– Thirdly, you can interpret the sentence as self-contradictory and, in this sense, therapeutic: because it speaks precisely of something about which one cannot speak. (The “whereof” names something that is indicated as unspeakable in the reflexive pronoun “thereof”.)

These interpretations contradict each other, but can be validated not only by the quoted sentence, but also by the contexts of the Tractatus and later writings. Once you have seen how many conflicting reconstructions of this and other classics exist, you might be quite puzzled by the idea of textual objectivity. It’s clear that the analysis of relevant arguments relies heavily on communication between logically trained readers, where the original text itself is often seen as an obstacle. Instead of focusing on how the negotiation process between readers shapes the reading experience, however, the approach remains one of optimizing the reconstruction of a classic. What emerges could easily be described as fan fiction.**

If we take this seriously and not merely as polemics, it becomes clear that philosophy, in certain schools of thought, is indeed in close proximity to entirely different literary genres. But even the insistence on philologically rigorous reading generally takes the text as the source of the doctrines and modes of thought derived from it, as is also suggested by the general distinction between primary and secondary texts. Overall, this assumption regarding reading can be described as objectivism. But how should we understand this objectivism in our reading culture?

2 The Text as a Possibility of Readings in Interpretive Communities – Objectivism as an Illusion

By objectivism, I mean the assumption that what we believe we have gleaned from the text is actually found within the text itself. On the one hand, this is a correct assumption, because all readers will confirm that they derive their interpretations from the texts. Of course, it must be added here that a text can indeed be read as a chain of propositions that are decodable and whose presence most readers will be able to agree upon. On the other hand, however, it is a misleading assumption, as can be seen from the fact that there are endless disputes about interpretations. Just think of the Wittgenstein quote. If this is true, then objectivism is, on the one hand, correct, but on the other hand, misleading. On the one hand, correct, on the other hand, misleading? Am I contradicting myself here? – Please bear with me. To resolve this apparent contradiction, we must recognize that a text is not identical to its reading. The text is a possibility for reading, while reading is the realization of the possibilities inherent in the text. Following James Gibson, we can speak of affordances that the text offers. As Sarah Bro Trasmundi and Lukas Kosch have shown, a text offers you various possibilities for action or reading. Which possibilities you ultimately choose in your reading depends on further factors. These factors are—so I argue—primarily social. Specifically, this means that whether you read a text in one way or another, and thus what meaning you derive from it, depends on your interactions with other readers.

Of course, you usually don’t even notice this because—especially in our reading culture—you are often alone with a text. But fundamentally, you were never truly alone with a text: As a child, you were, hopefully, read to. As a student, you were constantly corrected by others. And now, now that you’re an adult, you hear voices. Not in a pathological sense. The interactions with other readers are simply mostly implicit, solidified into habits, even traditions. Following Stanley Fish, I would like to call a group that shares certain interpretive habits an interpretive community. Fish locates the negotiation of meaning for texts within corresponding “interpretive communities.” You’ve learned to read menus, and you know what to do with them. And you wouldn’t mistake a menu for a poem, would you? Even before you skim the essay on your table, you know that it contains an argument because it’s a philosophical text—and if it didn’t contain an argument, it wouldn’t be a philosophical text at all. That’s how the tradition of your interpretive community dictates it.

(Taken from this quite insightful podcast.)

It is precisely the fact that a text is not identical with its reading, but rather offers possibilities for reading, that makes us prone to objectivism. The customs of certain interpretive communities are thus presented as properties of the text itself. From this perspective, objectivism with regard to the texts themselves is an illusion.

Now you might say: “Oh, it’s not so bad. Whether I believe I find the customs in the community or in the text itself is irrelevant; the main thing is that I find them!” – That may well be true. However, it becomes a problem when you are looking for something but expect to find it in the wrong place.

3 What Really Generates Objectivity – Reading, just like Singing

So how does reading work? Of course, much can be said about it. But essential points can be understood by considering reading in analogy to singing songs. Let us first return to objectivism.

Written texts have two important properties, it seems, which we also attribute to objective objects: constancy or repeatability and shareability. When I close a book, it seems the text is there constantly, or at least I can read it repeatedly. And when I lend you the book, it seems you can read the same text as me. Thus, these properties of repeatability and shareability seem to be inherent in the text itself.

On closer inspection, however, the matter is different. The aforementioned advantages also arise in a seemingly non-representational activity like singing. Listen to this:

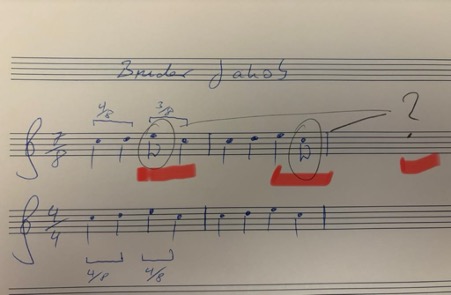

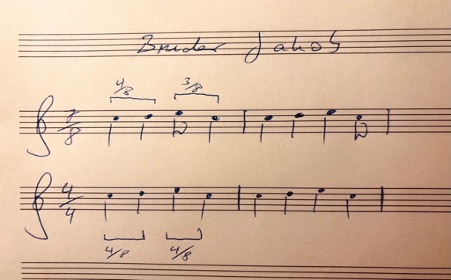

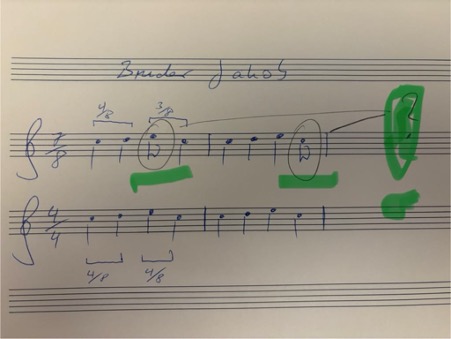

You just heard Bruder Jakob (Brother John, Frère Jacques)! Most of you will not only know it, but could also sing it effortlessly even if you were jolted awake at 3 a.m. Again, the song is consistently in your memory, and you can repeat it. Moreover, others can sing the same song. And you would even recognize it if someone sang it off-key or changed the rhythm.

The song thus possesses a certain objectivity; it is independent of our spontaneous performance and our imagination. But it doesn’t possess this objectivity because it is written down somewhere. If you listen closely, you’ll notice that, firstly, the song is played in a 7/8 time signature instead of the usual 4/4 time signature, and secondly, that it is much more richly harmonized.

The relational object, however, is not a text; there is no physical object to which you could point. Nevertheless, it seems to be an objectively given point of reference. What establishes objectivity despite all the variance is therefore not the physicality of the object, but rather these two properties: repeatability and sharedness with others.*** Sharedness, or rather, repeatability by others, plays the crucial role here. Why? Because without social sharedness, I could not be corrected in my repetitions. Alone, I could mistake any nonsense for a repetition.

Only in agreement with others can there be anything like a correct or genuine repetition. (This is the consequence I draw from Wittgenstein’s private language argument.) Only when you affirm that the 7/8 version is also “Bruder Jakob” is it considered “Bruder Jakob.”

For precisely this reason, in singing as in reading, it is not the physicality, but the shared repetition, that is, the correct repetition, that establishes objectivity. What singing and reading have in common here is that they are embedded in a long history of social interaction.

Like reading, you may have first experienced singing by being sung to, by it being repeated, embodied, shared, and perhaps even ritualized. Just as you were initially read to repeatedly in typical situations: reading and listening were embodied, perhaps in bed with a book and pictures. Shared, that is, perhaps by your mother, your father, perhaps with other children. And perhaps as a bedtime ritual that has shaped your expectations and structured the evening. Singing, like reading, is inscribed within you as a ritual, so to speak. That’s how we learn it. Reading is embedded in these biographical narratives, not just in an abstract tradition.

In my opinion, it is precisely these factors, and especially repeatability and shared experience, that lend objectivity to what is read, objectivity to which the text, much like a song, ‘in itself,’ offers only a possibility.

So what does this analogy with singing offer us? Firstly, it clarifies how, with regard to the factors of objectivity (repeatability and shareability), we ascribe an objectivity to texts themselves, which we actually derive from their social embeddedness; unlike texts, songs don’t have any discernible objects. Secondly, it points us to crucial social sites and situations: if we want to seriously engage with reading, with the negotiation of meaning among readers, then we must go to the places where this actually happens. Accordingly, a philosophical engagement with an 18th-century text would require us to examine epistolary culture, salons, and, more generally, the establishment of conversation as a site of thought.**** While it is quite natural for many of us to have conversations about texts, this form, conversation itself, arose at some point and (this is one of my conclusions from my central thesis) plays a decisive role in the meaning and use of texts pertaining to certain genres. Alongside peer-review processes, conversation is a crucial space where, not least, philosophical reading culture takes place. Accordingly, you can locate the different interpretations of Wittgenstein in very different discussions or communities: the positivist interpretation in the Vienna Circle, the mystical one around Elizabeth Anscombe, the therapeutic one, for example, around Peter Hacker.

The basic idea is thus: The objectivity attributed to texts is an illusion, suggested by properties of reading (actualizing affordances in the text) and projected back onto the text. Reading as a social practice is (like singing) repetitive and socially diverse. It is not the text, but social reading that creates objectivity.

As Suresh Canagarajah puts it: “Meaning has to be co-constructed through collaborative strategies, treating grammars and texts as affordances rather than containers of meaning. Interlocutors draw from other affordances, too, such as the setting, objects, gestures, and multisensory resources from the ecology. Thus, meaning does not reside in the grammars they bring to the encounter, but in the negotiated practice of aligning with each other in the context of diverse affordances for communication. In the global contact zone, interlocutors seek to understand the plurality of norms in a communicative situation and expand their repertoires, without assuming that they can rely solely on the knowledge or skills they bring with them to achieve communicative success.” This is precisely the point I am also trying to make: texts do not contain meanings, but rather offer affordances or possibilities.

4 The Consequences of the Illusion: The Degeneration of Objectivism into Mechanical Reading

If what has been said is true, then it is also possible that certain reading cultures will disappear or change. However, this does not necessarily mean that we will unlearn how to read, but perhaps only that the way we read and the places where meanings are negotiated can change. This is noticeable not only with regard to recent technologies, but also in everyday practice, especially in teaching. I believe, however, that the still widespread illusion that texts themselves are objective is leading to a degeneration in our reading culture. And here I come back to my initial observation that we might be living a scholar’s life with a chapter by Aristotle, while we spend only two hours on a student assignment.

This practice, which, incidentally, is also linked to increasing literacy, the so-called mass university, and the simultaneously stagnating number of lecturers, is initially perceived as stemming from external political pressure. Yet it is becoming so entrenched that the guidelines for student text production (for instance, in the Netherlands and Great Britain) are themselves so schematic that one actually believes one can judge after 20 minutes of reading whether the requirements have been met. Such a mechanization of writing and reading is, of course, only justifiable if one believes that texts themselves are objective entities that are accordingly either good or bad. This mechanization is, incidentally, not a consequence of ChatGPT. Rather, it is the other way round: a reading culture that is changing in this direction consistently learns to use such technology.

From the Netherlands, I know that the mechanization of reading is already taking hold in elementary schools, where, from the very beginning, students are taught reading comprehension (begrijpend lezen) in order to test their knowledge of text structure in multiple-choice tests. And then people wonder why most young people have no interest in reading.

It seems that such uninspired role models lead to readers who, in turn, produce texts for exams that are hardly ever read. So why should anyone bother writing them themselves? Why bother reading them?

All of these are developments that, at least at universities, cannot be described independently of the introduction of New Public Management in the 1980s. (What good is it to tell a student to look at the text to understand it if there is hardly any interest in doing so outside of class? No, universities are not ivory towers; rather, cultural deserts have formed around them, in which we see primarily stakeholders instead of interpretive communities. But this criticism is nothing new and also a bit one-sided.)

Because, of course, there are places where the meaning of texts is still negotiated. We find them at literature and even philosophy festivals, on social media under #booktok, in often student-led reading groups, and, of course, in our teaching and research events. Here, reading is sometimes so explicitly social that it is actually performed. This, too, is not entirely new, of course. If we are interested in the foundations of reading, we have to go to these places. What is particularly interesting for our reading culture, I think, is that Large Language Models erode not only trust in the authenticity but also in the objectivity of texts. We are experiencing a massive desacralization of the text. Because unlike the divine authority presumed behind biblical texts, we now constantly suspect a deceptive demon. Accordingly, I believe that the academic rebellion against this desacralization is also a rebellion against the death of the illusion that texts themselves possess inherent quality. To name this desacralization does not mean falling for the grand promises of relevant AI product manufacturers. But we can also use this technology to sensitize ourselves to the fact that it is not the texts themselves, but our reading, our singing, our rituals that create meaning and make it something shared.

5 A Few Conclusions Regarding the Practice of Reading

What are the practical implications of these insights? How can we improve reading practices through such findings? Firstly, I would like to remind you that this research project on reading as a social practice is only just beginning. But if the meaning of texts in reading is essentially unlocked through the interactions between readers, then it helps not to stare at the text itself, but to always ask ourselves first: What do I expect from this text? What am I assuming it’s supposed to tell me? Is it supposed to provide me with an argument for something? What do I do if the text doesn’t meet my expectations? Should I humbly assume that I’m too stupid for it? That I don’t belong to the club of readers who say they understand or even love such texts? And why is this tome even on my desk or in my Adobe Reader?

Once you’ve confused yourself enough with these questions, you can actually look at the text and see what’s written there without immediately searching for “the argument”. People always say you shouldn’t just read, but read thoroughly: But what does “thoroughly” mean? Should I choose lots of colors to highlight the incomprehensible passages? Seriously: This instruction is about as helpful as telling you to concentrate. How do I do that? Stare into space and roll my eyes cleverly? – How do you even know when you’ve concentrated well enough? If you can say something that your conversation partner nods to politely in agreement? I can order from a menu, I can sound good with a poem, but what do I do with a philosophical text? When have I truly understood something? We can still only see this through conversation. – Is that enough, though?

Well, a fundamental insight that follows from these theoretical considerations regarding reading is that a philosophical text offers possibilities or affordances, and thus always different ways of reading it. It is a myth of completeness, particularly prevalent in analytic philosophy, that all implicit possibilities can simply be made explicit. Such completeness contradicts the necessary openness or underdetermination in texts. Think again of Wittgenstein’s famous quote. Another very memorable illustration of this is the duck-rabbit, which, in terms of possibility, remains precisely both. My project would therefore not be to reconstruct the one true argument, but rather to reveal different and potentially conflicting possibilities. Accordingly, one must accept that the text allows for various interpretations, which are only gained within different interpretive communities.

At this point, philosophers usually develop a typical fear of relativism. However, as Stanley Fish already noted, emphasizing possibilities is not about a relativistic position, but about plurality. Such plurality, however, by no means leads to arbitrariness. But what, then, are the limits to this space of possibilities? First, there are of course propositional limits: you cannot say that a text asserts non-p if it explicitly asserts p. Unless, of course, you perceive signs of irony. Here, the matter of limits becomes difficult again; and you will ultimately decide one way or the other. Furthermore, there are situation-specific conditions of appropriateness. If someone asks for directions to the train station, it’s not appropriate to respond, paraphrasing Robert Frost, by musing about less-traveled paths. Just as one shouldn’t sing Frère Jacques at an inaugural lecture or at a funeral. Or should one? Of course, we can break with conventions. For example, it’s entirely up to you whether you sing the song in 4/4 or 7/8 time signature, or even reharmonize it psychedelically with suspended chords. Convention gives you something to play with, or sing with.

Accordingly, Alva Noë makes a crucial point when he compares philosophical texts to scores for thinking, which can also be interpreted in very different ways:

“What the philosopher establishes in their labors are not truths or theses, but rather scores, scores for thinking with. … The philosophy lives for us like a musical score that we – students and colleagues, a community – can either play or refuse to play, or wish that we could figure out how to play, or, alternatively, wish that we could find a way to stop playing.”

I would simply add that, analogous to musical notation, philosophical texts can give rise to a multitude of interpretations. Here we don’t just have a single, obvious interpretation of a duck-rabbit, but a whole zoo with possible shifts in perspective.

Now, that may all sound very nice. But one mustn’t forget that interpretations aren’t chosen arbitrarily, but primarily with regard to social affiliation. If you choose an interpretation, you might belong to a club that’s currently out of fashion. The problem with my musings, then, is that they can be received in very different ways. Academics, in particular, fear reputational damage; therefore, they are very reluctant to admit their lack of understanding. “I don’t understand this text” is usually taken to mean something like, “The author is too stupid to explain it to me properly.” If, on the other hand, one can express genuine and sincere incomprehension, one has truly made progress. But such humility is something one has to be able to afford, so to speak. Therefore, it’s not enough to simply seek conversation; one must overcome one’s shame. You can’t learn to sing well if you’re too afraid of singing off-key.

But at some point, you can truly begin to name the difficult parts and ask yourself exactly where and why you’re stuck. Reflected confusion then becomes a genuine conversation starter. Because if a text offers the possibility of understanding it, it also offers the possibility of not understanding it.

____

* Thanks to Arnd Pollmann for pointing out this passage.

** I borrow this classification from Charlie Huenemann, but I forget in which of his posts it was introduced.

*** On repetition in music and language, see Elizabeth Hellmuth Margulis’ On Repeat as well as Bente Oost’s vlog on this blog.

**** Thanks to Miriam Aiello for conversations on the topic of conversation.

When reading texts with lots of general remarks and little attention to detail, I often wonder whether they were produced by ChatGPT or some other LLM. I don’t like this kind of suspicion, especially in the context of teaching and evaluating. Not least because it primarily targets the author rather than the text: Has the author used an LLM and hence tried to cheat? So rather than assessing the text, I am incentivised to make a moral judgment. This readjusts my attitude as a reader in a crucial way. Rather than trying to enjoy the flow of the text or get into the argument, I wonder about the honesty and sincerity of the writer. While there is currently much discussion about cheating with LLMs, the unease that the suspicion causes me brings quite another worry to the fore: my own classism. Am I really worried about being cheated, or that the poor souls relying on AI are not learning to think for themselves? Or am I not rather mainly worried that these bullshitting texts produced by AI will soon be indistinguishable from the products of my authentic intellectual labour? Let me explain.

Tacitly cultivating classism. – Being what is called a first-gen academic, one might say I’ve earned my cultural capital the hard way. I still remember how I mind-numbingly practiced philosophical terminology at the age of thirteen, enjoying the cluelessness of my parents when I put it to use. Looking back, I think of myself as impertinent and cruel. Intellectualism doesn’t come across as thuggish as brute anti-intellectualism. But I would say that, wouldn’t I? More to the point, my intellectualism paved a way that seems now to be threatened by the fact that text production can be outsourced just like other kinds of labour. Intellectual work of certain kinds is indistinguishable from work outsourced to LLMs. Being annoyed by people’s use of LLMs, I don’t feel consciously threatened. But I do wonder whether it’s this class aspect that creeps into my judgement of those users.

What kind of work do we actually grade as instructors? – My hunch is, then, that my suspicion of certain writers who might have used LLMs is owing to a certain classism or class anxiety. If people can outsource intellectual work at least to a certain degree, I might end up suspecting (tacitly) that these people don’t belong where they claim to be. Now you might respond that part of this suspicion is fair in that it targets fraud etc. Yet, I’m not sure it is fair. Of course, when dealing with straightforward cheating, our responses might be justified. But most cases are not that straightforward, or so I suppose at least. Just consider the teaching context: We might say we’re distinguishing students who “have done the work” from those who haven’t. But making such a distinction seems to rely on the fact that some students actually “do the work” in relation to one’s class. Yet, what if we’re merely rewarding those students who have learned intellectual skills to produce great texts long before they set foot in our classes? In other words, we might not reward intellectual skills developed as taught by us but intellectual skills as picked up long before. So what are you grading in such cases? The things that people learned in your class or the things that people bring along? If you’re perhaps not actually assessing people’s progress in your course, then the question arises what’s so salient about the distinction between someone well-educated long before and someone making up for an earlier disadvantage by using tools like LLMs to improve their work.

AI use between shaming and rewarding. – My point is not to appeal to such classism to silence justified criticism of naïve integration of AI into teaching contexts (here is a pertinent open letter I co-signed). But classism is a real thing; and “AI shaming” seems to be a new way of exercising the related kind of gatekeeping. Now that people start noticing that AI shaming is on the rise, it doesn’t mean it’s just part of an arsenal of arguments in favour of Tech Bros (as this thread insinuates). The stigma of using AI for one’s work is as real as the problem of cheating and related vices. But that doesn’t mean AI usage is exhausted by this. The world we live in will increasingly reward using AI. As an instructor I’m primarily faced with downsides when students use it to cheat, but as soon as we’re not acting as professionals ourselves we might become quite dependent on the benefits of AI. Just step outside your comfort zone and hand over the task of reformulating a text with a pertinent perspective! Having drafted a couple of legal documents, for instance, I have found that ChatGPT is a helpful tool. Of course, I still need to check on points, but the Legalese produced by this device is of real help. But relying on such help will be shamed by the next best expert in legal matters. And then it’s me who is at the receiving end of AI shaming.

From texts to their producers. – If we take the class perspective seriously, AI is presenting us not only with challenges but with contrary assessments ranging from worries about fraud, on the one hand, to worries about inappropriate gatekeeping, on the other. So how can we respond to this situation? My hunch is that we first need to acknowledge that this technology changes our reading culture. For a very long time, at least since the critical philological work of the 19th century, we have learned to see texts as something objective in that they can be seen independently from their producers or authors (or the layers of production of texts). As Daniel Martin Feige noted, digitalization involves a striking return of the author (see part three of his Kritik der Digitalisierung). With the constant possibility of text production through LLMs, we will focus even more on the author and marks of authenticity again, whether we like it or not. But this doesn’t mean that we need to resign ourselves to constant suspicion.

Authentic versus bullshitting texts. – Turning to texts themselves, the crucial question for us will be whether such texts are authentic and genuine expressions by an author or bullshitting texts. In educational contexts, we have known long before the advent of LLMs that our grading systems incentivise bullshitting, with or without LLMs. So I’d repeat that we educators need to focus on actually reading rather than going for quick judgments. This would mean not merely assessing whether someone is cheating but reflecting on what we expect and on whether our expectations mainly pertain to class markers, as seems to be the case in many instances. The bottom line seems to be this: Our worry should not be about the use of AI or AI-prompted texts, but about bullshitting texts. This might still mean that our current reading culture (where we treat texts as something objective) might come to an end. But so be it.

CfP: Reading as a Social Practice. An Interdisciplinary Workshop

Berlin, 27-28 March 2026

Organised by Irmtraud Hnilica (Mannheim/Hagen) and Martin Lenz (Hagen)

According to a widespread consensus, we are currently living through a reading crisis. This workshop seeks to take a step back from the rhetoric of decline and instead raise the question of how reading itself can be conceptualised and approached from different disciplinary perspectives, particularly in philosophy and literary studies. To a first approximation, we propose that reading is determined not only by texts themselves or by individual readers, but mainly by the interactions between readers. We especially invite submissions engaging with this claim—whether through historical investigations of reading cultures, theoretical reflections on the social dynamics of interpretation, or analyses of contemporary practices in both analogue and digital spheres. We explicitly welcome submissions from scholars at all career stages. The aim of this international workshop is to spark new collaborations that will eventually result in a joint interdisciplinary network devoted to the study of reading as a social practice.

To submit, please email an abstract of around 500 words to Martin Lenz (martin.lenz@fernuni-hagen.de) no later than 31 October 2025. Please use ‘Reading 2026’ as the header of your email. The email should contain a short bio with the author’s details (including position and affiliation). We hope to notify you about the outcome by the end of November 2025.

The languages of the workshop are English and German. For each talk, there will be time for a 30-minute presentation, with about another 15 minutes for discussion. Upon acceptance, we grant reimbursement of accommodation and travel expenses.

***

CfP: Reading as a Social Practice. Interdisciplinary Workshop

Berlin, 27-28 March 2026

Organised by Irmtraud Hnilica (Mannheim/Hagen) and Martin Lenz (Hagen)

According to a widespread consensus, we are currently experiencing a reading crisis. This workshop seeks to step back from the rhetoric of decline and instead ask how reading itself can be conceptualised and approached from different disciplinary perspectives, particularly in philosophy and literary studies. As a first approximation, we propose that reading is determined not only by the texts themselves or by individual readers, but crucially by the interactions between readers. We look forward to contributions that engage with this thesis, whether through historical explorations of reading cultures, theoretical reflections on the social dynamics of interpretation, or analyses of contemporary practices in analogue as well as digital spaces. Submissions from scholars at all career stages are explicitly welcome. The aim of this international workshop is to initiate new collaborations that are to lead to a joint interdisciplinary network on reading as a social practice.

Please send an abstract of about 500 words by email to Martin Lenz (martin.lenz@fernuni-hagen.de) no later than 31 October 2025. Please use the following as the subject of your email: Reading 2026. Please supplement your abstract with a short academic bio stating your position and institution. We hope to be able to respond by the end of November 2025 at the latest.

The workshop languages are German and English. Each talk is allotted 30 minutes, followed by 15 minutes of discussion. Travel and accommodation costs will be covered.

“… interpretation is the source of texts, facts, authors, and intentions.”

Stanley Fish, Is There a Text in This Class?

Do you remember when you first committed some of your own thoughts to paper? Perhaps you kept a diary, perhaps you wrote poems or lyrics or crafted a letter to a friend. Perhaps you had worked on the aesthetics of your handwriting. Anyway, there it was. Something that you had written could now be read and, of course, misread in a distant place during your absence. This striking distance became even more evident to me when I saw my words, not in my clumsy handwriting, but in the typeface of a word-processor. Imagining that someone would read my words not as my personal scribblings but as a text in an authoritative typeface made me proud but at once seemed to diminish my personal impact on the text. In any case, the absence or possible absence of the author from something written, I suppose, is what turns texts into something objective. As I see it, texts become objective when they can be read independently of the writer, of what the writer says and thinks. If this is correct, it seems that written texts are fundamentally different from spoken texts or thoughts. In turn, this makes me wonder whether it’s written texts alone that afford the interpretive openness allowing for different readings or interpretations as we know them in the humanities of our time. In what follows, I would like to pursue some perhaps naïve musings on this issue.

Thinking versus speaking versus thought?

If you observe what you say in contrast to how you write, you’ll probably notice a stark difference between spoken and written language. While academics sometimes seem to try and imitate the grammatical standards of their written language in their speech, we quickly notice that the grammatical rules, word choices and other aspects are vastly different. Pondering this issue quickly brought me back to the ancient and medieval doctrine of “three kinds of language”, according to which thought is expressed through spoken language and spoken language is signified by written language. But once you notice how different speaking and writing already are, it’s difficult to give much credit to said doctrine. The very idea that writing is a set of signs of what is spoken strikes me as a very impoverished understanding of the difference. This makes me wonder when written language was first considered as a set of signs independently from spoken language. Following Stephan Meier-Oeser’s work, my hunch is that William of Ockham and Pierre D’Ailly in their logical treatises are among the first to deem written signs as independent from spoken language. (Sadly, it’s not entirely clear why they hold this in contrast to many of their fellow thinkers.) Now, once you think of written language as independent from speech, it seems that you acknowledge something that could be the objectivity of the written text. Of course, long before the written text is acknowledged as an independent signifier, there have been sacred texts like the Bible that were considered objective in some sense. But experiencing our very own writings as independent from our speaking must do something to the way we think about texts and their interpretability more generally, or so I think.

The written text as an objective ‘thing’

The way we encounter written texts or books (be it on paper or screens) seems to present them as distal objects, independent from how we interact about or with them. Like the table in front of you, the book on your desk or in your pdf isn’t altered when you look away. This experience is certainly at least in part responsible for the common assumption that texts and their meanings are stable items independently of us. Likewise, our experience of reading is commonly thought of as grasping something external to us or our interactions. But why? While I myself have begun to think that reading is in many ways a matter primarily dependent on interactions between readers, I equally wonder how written texts, non-sacred texts in particular, have earned the status of independent carriers of meaning that can be hit or missed. Our current reading practices inside and outside of academia seem to corroborate this assumption. – (What does it say? This is a question that silences classes but equally fosters the pretence that texts are stable unchanging sources of meaning that provide all the necessary constraints for possible interpretations. Yet, not knowing whether we’re reading a recipe or a poem, we are probably unable to tell the genres apart without context. “Context” – this harmless little term obscuring all the greatly important factors allowing for recognition, and constantly underestimated as a “side issue” when it comes to competing readings!) But what does it take for a written text to be actually seen as independent in such ways?

The advent of ChatGPT

Investigating the question of the objectivity of texts will take some time. But currently it seems that this objectivity is being undone in quite unexpected ways: the advent of ChatGPT does not only call into question the production of texts through proper authorship. Rather, it also calls into question the independence of written language as a system of signs, thriving on a supposed text-world relation that has been taken for granted for a very long time. Reading a piece of text, we can no longer presume that it was produced by a person having a relation to the world, to themselves and to other people making it a rational item, interpretable by rational beings, or simply readers.

How did we get here?

Where do I begin? I’m getting into a new genre, fairly new at least for me: autosociobiography. While the term seems to have been coined by Annie Ernaux (see here for a volume on the genre), this kind of autobiography is perhaps not entirely new: As I understand it, it is an autobiography that does not merely give an account of one’s own life, but also presents it as a sociological or political analysis.* So this genre doesn’t just add a bit of reflection. Rather, it seems to be designed as an approach to (social) reality through a first-person narrative. Of course, sociology is not the only discipline in which this kind of approach is a clear enrichment. But although we also see autobiographies of philosophers and receive them as philosophical works, the potential of this genre leaves much space to be explored.** So, how about an autophilobiography? After all, a decidedly personal approach opens up the possibility to give pride of place to experience – a concept much cherished but also often banned for a supposed lack of universality. A fairly recent attempt at an autobiographical approach to philosophy I can recommend is Michael Hampe’s What for? A philosophy of purposelessness (Wozu? Eine Philosophie der Zwecklosigkeit). Reading this made me think that this is what I, among other things, want: If you are a more regular reader, you’ll know that I attempt to use a decidedly personal perspective now and then to make a point (a perhaps obvious piece is the one on the phenomenology of writing). Yet, once we allow that the autobiographical approach doesn’t have to be implemented in an entire book, we can see that there are a lot of reflections that are worthwhile bits of philosophy in this sense. Let me give just one example:

Being interested in the history of music in the 20th century in particular, I swallowed Tracey Thorn’s Bedsit Disco Queen where she describes the music scene of her early years before Everything But The Girl was formed in Hull, England. This as well as her more recent Another Planet can certainly count as autosociobiographies. While Thorn is a great singer and writer, I was equally taken by her reflections on singing in her essay collection Naked at the Albert Hall. Reading her account of singing inspired me to reconsider Spinoza’s philosophy of mind and particularly his account of philosophical therapy (I just finished a paper draft involving Thorn and Spinoza). I think that Thorn, while describing her way into singing, is really expressing a kind of reflection that Spinoza would deem an approach to philosophical therapy in the sense of re-ordering ideas about what matters. Let me just quote from her first essay in Naked at the Albert Hall:

“When did you know you could sing? people ask me. How did you even start? Where does your voice come from, is it from inside your head or inside your body? … I’ve written before that it was a disappointment to me when I realised I wouldn’t be Patti Smith, but that was a little way off in the future when I first heard her in 1979. … My first reaction to Patti Smith was one of possibility. I wanted to be her because a) on the cover of the record she looked like a boy, and I felt that I pretty much looked like a boy, and she made looking like a boy a beautiful thing; and b) the first time I tried to sing along with those opening lines on Horses, I realised in fact that I could sound like her. … Low, dark boyish, it existed in a space that seemed familiar, and contained the echoes of the sound I was tentatively exploring in the privacy of my bedroom. Joining in with her I found that we did indeed occupy the same ground, and without knowing how or why I had an immediate sense of my voice ‘fitting’. Imagining this to be a conceptual ‘fit’, I of course believed that I sounded a bit like Patti Smith because we were alike, it was a metaphysical connection being made. And in doing so I fell into the first and most basic misconception about vocal influence – the idea that it transcends the physical. Now I believe that the reason she implanted herself into my imagination as my first vocal influence was the simple accident of vocal range … My perfect, ideal range. Still the place I most like to sing. … Almost the entire Horses album is pitched perfectly for me … Joining in with Patti on these songs was a joyous experience, utterly secret … The basic physical coincidence of our vocal ranges connected us not just ideologically, but physiologically. … The lungs propel air, which passes through the vocal chords, making them vibrate and producing the sound we use for either speaking or singing. 
But unlike any other instrument, these components are your own actual body parts, and the sound you make is both defined and limited by your anatomy. As an instrumentalist you might practice and adapt your technique in order to follow the style and sound of players you like … But as a singer there is only so much you can ever do to adapt the sound of your voice to emulate singers. We label as inspirational those whose sound lives somewhere close to our own …” (Thorn 2015, 3-6)

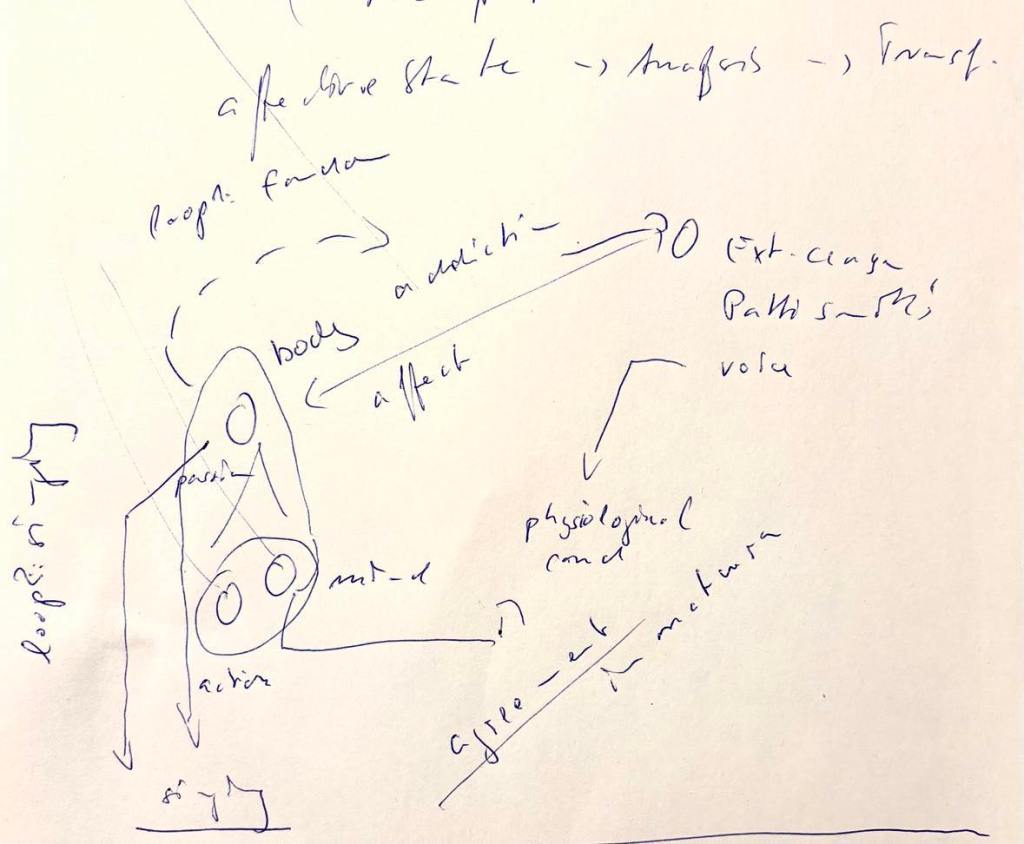

There is a lot in these observations. A couple of years ago, I took her observations as an anchor to think about active forms of listening to music. But now I think this goes deeper and exemplifies how we re-evaluate experiences and thus change (narratives about) ourselves. Apart from the aspiration and attraction depicted, it is clear that the author, Thorn, takes her body to be in agreement with that of Patti Smith, at least as far as the conditions for singing are concerned, and that that agreement is physiological. At the same time, the author realises that this agreement is easily confused with one of personality. But what she is describing is a common concept of her physiologically determined vocal range in agreement with that of Patti Smith. In Spinoza’s terms, Thorn, getting this more distinct concept of her physiological likenesses with Patti Smith, is revealing an agreement in nature. Arguably, while the initial exposure to Smith’s voice in 1979 is a confused attraction, the account Thorn gives in 2015 is a therapeutic disentanglement of the initially confused concept, distinguishing the external or distal cause, Smith’s voice, from the internal or proximate cause, her own voice, and the resonance between them, i.e. the agreement.

(An early attempt at scribbling down Tracey Thorn’s account as it relates to Spinoza’s Ethics)

____

* Dirk Koppelberg kindly corrected me on FB and offered the following characterisation: “As I read Annie Ernaux, she does not ‘also’ present a sociological or political analysis of her life but delivers an aesthetically arranged and composed description of it that illuminates certain sociological and political determinants of her life. This kind of writing seems to me an aesthetically challenging alternative to a sociological and political analysis in the strict sense.”

** The autosociobiographical approach will also figure in a project on reading as a social practice that I’m currently planning together with Irmtraud Hnilica. Stay tuned for updates on this project soonish.

It goes without saying that the title question is a bit of a provocation. Nevertheless, the point I want to make is that reading is first and foremost an interaction between readers and the ‘text itself’ comes second. It’s not just one of those weird hear-me-out appeals. Rather, I think that this insight should have repercussions on our practice of teaching and, perhaps, of reading.

Early Beginnings

Come to think of it, before you even learned to read, you had probably been read to! Be it by your parents or by mischievous siblings. At least I remember that, before I ever set eyes on a text myself, my mother used to read fairy tales to me, hoping I’d fall asleep. So my first encounters with reading were actually interactions, not so much with the text, but with the special reading voice of my mother. A reciprocal interaction: My mother would read; I would listen. My mother would stop; I would plead. Tell me, gentle reader, is my listening already a form of reading? I’m not sure. – Anyway. Likewise, learning to read at school involved first and foremost interactions with the teacher and the class. Here, however, the reciprocity would become slightly asymmetrical: I would not just try to make sense of the letters on the blackboard; I would be judged on my performance. I don’t remember much of it, but I still feel the excitement of internally gliding along with my inner voice trying to remember the alphabet correctly: A, B, C, D, E, F, G … H? I don’t actually remember whether we also had to learn to write the letters when learning to read them, but it feels like it must have been a related process. In any case, reading is taught through an interaction between teachers and pupils (and asymmetrically so), when actual texts are still a long way away.

Tacit Agreements in Reading

Let’s slowly move on to my claim then. My thesis is that at least a crucial part of reading consists in partly tacit and partly explicit interactions between readers. Why would this be so, though? Doesn’t reading mainly consist in grabbing a text and reading it? Well, before you actually pick up a text, you’ll be fed with assumptions about the genre. So you’ll know what to expect before you set eyes on the actual page or screen. If you enter a restaurant, for instance, the items on the menu won’t come across as strange poetry. Conversely, if you picked up a book from a poetry section, you wouldn’t take the text to offer a menu, even if there was talk of pizza and pasta on the page. And if you enter a philosophy class, you’ll of course expect to be offered philosophical texts. In any case, the habitually familiar settings already stir tacit expectations about the texts in question. I consider such settings tacit agreements between the reader and the provider of texts. If you enter a restaurant, you’ll expect a menu. If you enter a literature course, you’ll expect a literary text (or at least one dealing with literature). Questions (mostly on genre) will be raised if these expectations are frustrated. At this point, the crucial stages of interaction are about seeing whether expectations of genre are met or frustrated.

The Topic of Texts

Philosophical and certain literary texts often thrive on a certain openness or even ambiguities. Unlike manuals or menus, their understanding is not exhausted by being able to act on their content; that is, to build the shelves or order the soup successfully. This means that it’s often an open question what’s going on or what the text is actually about. Deciding on the precise topic of a passage or paper or book is thus often a matter of debate. This can even be true of your very own texts. (Agnes Callard once gave a nice example of her book as an Ugly Duckling by reporting on how she started out thinking it was on the weakness of will when it later turned out that she was really talking about aspiration.) So even if we’re clear about the genre of a text, we might remain unsure about its topic. In such situations, we might recommend all sorts of scholarly remedies, such as looking into the text in question, comparing it with other texts or some such straightforward means. However, what I think is really doing a great part of the work is the interaction with other readers. This doesn’t mean that the text plays no part in it. But the attempts at settling the topic will crucially involve an attempt to reach agreement with other readers, be they alive or part of a tradition of reading texts in a certain way.

The Triangulation Thesis

This idea has its roots in Donald Davidson’s so-called triangulation argument: Understanding linguistic utterances or the beliefs of my interlocutor involves not just understanding what object these utterances are about. Rather I need to interact with my interlocutor to fix the object in question in the first place. Jeff Malpas puts this point as follows:

“Identifying the content of attitudes is a matter of identifying the objects of those attitudes, and, in the most basic cases, the objects of attitudes are identical with the causes of those same attitudes (as the cause of my belief that there is a bird outside my window is the bird outside my window). Identifying beliefs involves a process analogous to that of ‘triangulation’ (as employed in topographical surveying and in the fixing of location) whereby the position of an object (or some location or topographical feature) is determined by taking a line from each of two already known locations to the object in question – the intersection of the lines fixes the position of the object … Similarly, the objects of propositional attitudes are fixed by looking to find objects that are the common causes, and so the common objects, of the attitudes of two or more speakers who can observe and respond to one another’s behaviour.” (Italics mine)

So while the object or Ding an sich is elusive, it’s being fixed in the interaction with the other. Similarly, I think that the topic of a text is elusive. Determining it requires triangulation with other readers. Once we admit that, we’ll see that becoming clear about our interlocutor’s assumptions and authorities as well as their relation to our own take on the text is a crucial element in reading.

***

Part of this idea has been presented at an interdisciplinary workshop on “Reziprozität” at the FernUniversität in Hagen. I’d like to thank Dorett Funcke for inviting me to present my musings on this occasion. Special thanks to Christian Grabau, Irina Gradinari, Irmtraud Hnilica, Tanja Moll, and Marija Weste for further discussions of this idea.

It’s true, even though I said that I wouldn’t worry too much about students using ChatGPT, a few doubtful cases have made me wonder what to do about it. I’ve seen a lot of good advice and discussion already (see e.g. this piece by Matthew Noah Smith among further discussions and resources), but nothing has quite convinced me for my own endeavours and settings. What I am particularly worried about is that some students might stop working through the crucial hardships of writing entirely: trying out formulations, thinking carefully about structure and terminology, setting goals, failing, revising, refining and trying again. Obsessing (especially in how we grade) about the quality of the product (the exam or essay), we might forget about the point of teaching writing. After all, it’s not the odd successful exam or essay but reflecting on shortcomings and setting priorities that will foster learning. As Irina Dumitrescu aptly puts it: “But the goal of school writing isn’t to produce goods for a market. We do not ask students to write a ten-page essay on the Peace of Westphalia because there’s a worldwide shortage of such essays. Writing is an invaluable part of how students learn. And much of what they learn begins with the hard, messy work of getting the first words down.” The main reason for emphasising such tasks, then, is not to torture students, but to teach them to think successfully and to afford them control over the process of thinking. So before I set out my ideas for examination, let me briefly motivate my approach.

Two phases of writing. – As I see it, writing is a kind of decision-making. While (1) tacitly articulating things in one’s head and attempting to write them down might count as thinking, (2) coming down on a certain way of phrasing means to decide or commit oneself to a particular mode of expression. It’s crucial to see that these are two very different phases and the way from phase one to phase two might be very long and disparate. As a student, I simply could not get to phase two without very torturous and long processes of trying things out. And sometimes I would never even reach phase two. Other times, I would need to write down two to three pages in order to end up writing and committing to a phrase that I had initially formulated in my head. It felt like slowly working towards finally writing down a phrase legitimately that I had idly considered in the beginning of writing my text. (Even if you’re different from me and do everything in your head before writing down a single line, you need to practise weeding out bad formulations before.) When you do this in handwriting, the constant crossing out and revising remains visible. Today, computers and formatting allow even the most hapless scribbles to look like parts of a finished book manuscript. The perfection of layout suggests a perfection of presentation that leaves the traces of desperate revisions invisible. Coming to phase two, then, means to have ruled out plenty of unsatisfactory formulations and alternative modes of structuring. Arguably, shortening this process of phase one by jumping on the next best phrase or sidestepping it completely by leaving it to ChatGPT means sidestepping thinking altogether and ending up with a text that no-one ever decided on.

Accordingly, I want to discourage students generally from unreflectively holding on to the first form of words that passes through their minds. Rather, I’m looking for tasks that make students ponder on their work and encourage second thoughts. So I hope to design something that works even for students who are not resorting to ChatGPT or other forms of cheating.

What I want students to go through. – Is this a fitting expression, and what is left out in using it? Does this structure work, given the content? What would change if I presented things in a different order? What is the main point I need to get across? How did I come to think of this as the main point? Should I rather focus on a seeming side-issue? Etc. Between the blank page and a successful piece, there are so many things and versions and other potential pieces that might be equally successful. Despairing over such choices is a crucial part of the process of writing. Leaving it to ChatGPT means learning nothing, nothing at all about writing and about yourself, let alone about ways to find your voice. Drawing out the gloomy consequences of leaving thought-processes to machines, Maarten Steenhagen sees us heading “towards a de-skilled society. More and more, thinking itself is being turned into a service, a product that is offered by some company or other. When people look for answers or want to understand something, they turn to Google, Bing, or to social media. There, they are likely to find easily digestible, byte-sized snippets that will do for most practical purposes.”

So what are the tasks I’m going to try out in my courses? – How can I see and evaluate whether students thought about the presentation of their ideas? I guess by asking them to do so explicitly. So in future exams and essays I will add two kinds of tasks to the standardly requested answers (or papers).

While these tasks are thought of in relation to exam questions, they could also be introduced in essays and other assignments. Here, they could easily be requested in the form of footnotes offering some self-reflection.

I don’t know if these and related tasks will prevent ChatGPT from being used and abused, but at least the request to invoke discussions that happened in class will be difficult for such a device to mimic. In any case, relating an answer to those discussions takes some thought, which ensures at least some reflection on the part of the student.

At this point, I’m just beginning to experiment with tasks that encourage reflecting on one’s texts. I’m pretty sure there are many people who have already thought about this and related issues more thoroughly. (* I am particularly grateful to Sara Uckelman for sharing her reflections. You can follow up on these on FB.) Please feel free to add ideas or, as always, comment on the ones presented.

***

As it happens, this blog has now been up and running for five years. So I’d like to thank you all for your continuous reading, encouragement, and discussion.

“I am touching on a point that I’ll soon leave behind again, since it relates to the profoundness that I intend to bypass, I mean the disparity between university and truth. To study medieval philosophy in a philosophical way one has to learn a lot, but one should not prioritise learning. As with any kind of philosophy, one has to ask questions. One has to have problems; one has to have confidence in being able to solve them; one still has to be on the move, wishing to make discoveries, wishing to learn something of vital importance from old books. This is countered by many intimidating experiences, especially during one’s studies. One loses this confidence if one is not encouraged. This encouragement comes only from others, from role models, from friends, from teachers whom one – let’s be frank – loves. Only among friends can one do philosophy. But if university career paths merely produce sober thinking clerks (Denkbeamte), then philosophy no longer exists at universities. And without this spark you might still become a specialist in medieval logic – which is no small endeavour – but then medieval philosophy is not just dead but forgotten, too.”

Kurt Flasch, Historische Philosophie, 2003*

In times of increasing worries about ChatGPT and education systems more generally it’s soothing and inspiring to re-read some of the works of my teacher Kurt Flasch. Neither he nor my PhD supervisors Burkhard Mojsisch and Gert König were very good at preparing me for a career on the international job market, but they surely inspired some resilience against its crushing mechanisms. Re-reading the passage I translated above made me think about love of teachers again. Not in the recently well-rehearsed sense of academic ‘metoo stories’, but in the sense of what I’d like to call love as imitation. I know there are a lot more topics in the offing, but the idea of love in academia is, as far as I can see, perhaps the least understood.

So what does it mean to love a teacher? – Quite simply, to love one’s teacher means wanting to be like them. While it might involve interacting with them on some level, the crucial aspect is wanting to become like them, and that means, for instance, to approach problems like them; to speak, sound and listen like them; to read like them or perhaps even to enter into the form of life displayed by them – in one word: to imitate them. (As I have argued earlier, love is, amongst other things, the ability and desire to understand another person. A strong way of understanding the other, then, is imitating them.) When I was a student, I had a couple of professors I really loved in that sense. I ended up following their courses, not primarily because I was all that much into the topic, but because I thought that, whatever they would teach, I would be learning something worthwhile. But how do you learn, how does that kind of love play out? While I was (back then) completely unaware of what that meant, I just attempted to imitate them. This was quite palpable to me. When I wanted to pursue a certain (stylistic) approach, I would simply hear and try to imitate their voice or their style when writing. – You might find this strange, but that’s probably what’s going on when we learn to find our voice in any kind of art, be it playing music, trying to paint or draw, or trying to speak and write.

Shouldn’t we aim at independence? – I guess the reason why imitation is so underrated in teaching is that we’re told to value independence. This is a fair point, but there are two issues that should be considered in response. Firstly, there is no independence without belonging. We’re not monads but always relating to a form of life and style that allows us (and others) to recognise that we’re engaging in the kind of practice we wish to engage in. How do I know I’m playing music if there is no one I’m relating to in my musicianship? Secondly, when we imitate we are never perfect imitators or impersonators – we end up appropriating and making things our own. So when I imitate my favourite teacher, you won’t hear Kurt Flasch but – willy-nilly – an appropriation of his approach. In fact, the initial enthusiasm for pursuing something is fostered most by imitating a role model, be it a musician, an actor or a philosophy professor. In doing so, we might begin by rehearsing the things – half understood – we value most. After a while, though, we’ll find them pervading what we take to be our own voice.

Where to go from here? – Being a teacher myself, I think I should be aware of the facts surrounding the imitative ways of learning. After all, students don’t do as we say but imitate what we do. So if we act mainly as competitors on “the market”, students will see and imitate us in this respect. If we’re policing them as potentially fraudulent users of ChatGPT, they might follow suit. But what if we were to follow through with the idea that the best kind of philosophy develops in a community of friends?

_____

* Kurt Flasch, Historische Philosophie, 2003, p. 67:

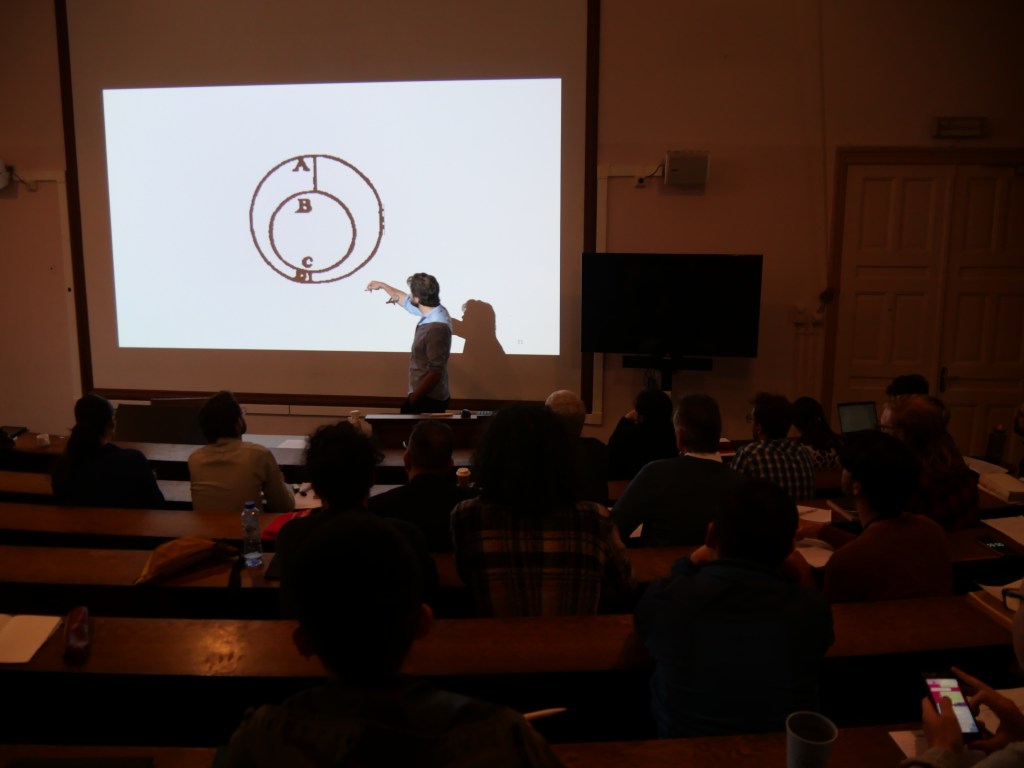

Had you asked me three weeks ago what historians of philosophy should focus on, I would have replied that there is too much focus on individual authors, be they canonical or underrepresented figures, and that we should return instead, at least every now and then, to the question of how certain texts fare in debates or in relation to problems. However, that was three weeks ago. Last week, I co-organised and participated in a summer school on Spinoza, the fourth edition of the so-called Collegium Spinozanum. Having experienced this, I am all in favour of focusing not only on individual authors, but on canonical ones. The reason is not that the current diversification attempts are bad or wrongheaded. Rather, I see studying canonical authors as a means to an important end in its own right: building a (research) community. In what follows, I’d like to explain this in a bit more detail and also say some things about forms of interaction and support in academic contexts.

(Collegium Spinozanum IV – Photo: Irina Ciobanu)

Unacknowledged reasons for being canonical. – The case for or against studying canonical authors is often framed as one of supposed greatness versus political reasons. Great authors, it is assumed, are deemed thus because they were “great thinkers” who still speak to our concerns. Underrepresented authors, by contrast, are taken to be either just “minor figures” or “unduly neglected greats”. There is much that can be said critically about such lines of reasoning, but what I’d like to stress now is that these reasons largely ignore the community of readers, i.e. the recipients. Focussing on reasons in the “object of study”, they obscure the point that a good part of the reasons for choosing such an object might lie in the recipients and their common interests. But arguably it’s these common interests that shape a real community, not the supposed “lacuna in the literature”. So when a number of people think that we should read Spinoza, this might not be triggered by Spinoza (alone) but by the fact that there is something that speaks to certain people at a certain slice of time. In any case, this was the feeling I had when listening to all the papers and conversations at our summer school: We form a real community in that we want to talk to and understand each other – a feeling that was sustained not just through the week but also by participants’ frequent references to earlier editions of the Collegium (see the FB page related to earlier events).

A common corpus and language for diversity. – Given the diversity of interests (ranging from well-rehearsed arguments in Spinoza to seemingly remote theological questions, from detailed historical reconstructions to actually practised meditations), reciprocal understanding required and found a common corpus and language in Spinoza’s works. There were mostly about 60 of us in the room, with quite different leanings, but everyone had at least read Spinoza’s Ethics and understood how parts were referenced. This point is by no means trivial when you’re part of a group composed of people from very different academic stages (ranging from professors near retirement to third-year BA students) and various geographical regions. All too often, the diversity of assumed expectations and backgrounds silences people and lets impostor syndrome run wild. If you’re at a conference on a historical topic rather than a fixed author, you’ll shut up almost everyone when you steer the discussion to some notoriously understudied authors or areas. “Oh, you haven’t heard of this anonymous treatise from 1200? It’s quite important.” A relatively small corpus, by contrast, not only facilitates the conversation; it also ensures that I’m going to learn many new things about texts that I thought I knew inside out.

“Canonical” doesn’t mean “well-known”. – Let me return to this last point once more. The status of being a canonical author is often equated with the assumption that we know this author fairly well (and thus should enrich our historical picture by studying underrepresented figures). But this is only true insofar as we repeat canonical interpretations of canonical figures. Once we enter into new conversations and accept that what (at least partly) drives our questions is owing to the interests of the recipients rather than to “the object of study”, we can see why every generation must start anew or, in Sellars’ words, why “the probing of historical ideas with current conceptual tools” is “a task which should be undertaken each generation”. This point should not be underestimated. What we did during this recent summer school on Spinoza was to have a vast number of philosophical conversations, trying to push the limits as far as we could see. We were talking mereology, necessity, demonology, intuitions, evil, and at the same time wondering how Ricœur and Wittlich or we ourselves were faithful to Spinoza’s texts or whether Spinoza had lied to his landlady. In this sense, the reference to the canonical author does not reinforce canonicity, but works like a crossroads and allows for striking out in all directions.

Ultimately, the focus is not the author but the community of readers. – The diversity of backgrounds as well as that of approaches should make clear that, ultimately, the focus of conversations is often not the author but the facets afforded by the interaction of the community. So the point of focussing on an author, a canonical one at that, is not to adhere to the canon or to try to restate ‘the intention of the author’. The point is rather what the author affords us: growing into a community of readers, a corpus accessible across the globe, a common language to converse about many things we might only begin to understand.

Summary. – At the end of the collegium, I tried and failed to sum up what we achieved together. Instead, I could only quote a poem by Robert Frost that I wish to restate here:

The Secret Sits

We dance round in a ring and suppose,

But the Secret sits in the middle and knows.

(Collegium Spinozanum IV – Photo: Irina Ciobanu)

Local challenges for the summer school. – Since summer school participants do not have angelic properties, they do need all sorts of things, not least a place to sleep. At the time of the summer school, Groningen also hosted two concerts of an infamous German rock band, entailing that most available accommodation was booked out long in advance. Had it not been for our personal efforts and our colleagues from the university’s summer school office, Isidora Jurisic and Tatiana Spijk-Belanova, the summer school could not have provided accommodation for the participants. A lesson for the future is that a university town should probably balance its interests accordingly and take responsibility for leaving some resources for such events.

Thanks. – It doesn’t go without saying that this wonderful event wouldn’t have been possible without the participants, all attentive and present till the very last moment. In particular, I would like to thank my co-organiser, Irina Ciobanu, and the inventor of the whole affair, Andrea Sangiacomo, who ran the first three summer schools on Spinoza since 2015, as well as our keynote speakers who are, besides Andrea, Raphaële Andrault, Yitzhak Melamed, and Gábor Boros. Thanks also to the Groningen Faculty of Philosophy and to the German Spinoza-Gesellschaft for financial support.
