Doing philosophy …

Today was the final session of my three-day course on medieval philosophy. As so often when I’m about to send my students home after difficult work on texts, I worry that they end up mainly confused and at a loss when it comes to writing their papers. So today my doubts got the better of me, and I talked them through a brief sheet I had prepared on the fly. The main idea was to hand out questions or tasks that they can use to work through the material, in order to move from a mainly exploratory phase to actually starting a systematic writing process. After talking them through the sheet, I asked them to come up with a response to each item and to sketch a paper or thesis based on it. After 20 minutes of preparation time, we discussed three sets of replies. The point was not to give exhaustive responses, but to mark starting points. My hope was that the process could be facilitated by indicating (in brackets) what they actually had to do. The results left me stunned. Below, I’ll just leave you with the notes on the sheet.

Getting started

Throughout the course, I asked students to come up with what I call structured questions targeting a difficult paragraph or phrase in the text. So the starting point for the exercise is to look for a difficult passage and write down a structured question about it. Going from there, students had to pick a passage and work on the following items.

Preliminary questions (mostly settled during the course)

Operationalizing the question about the text:

Questions for your planned work:

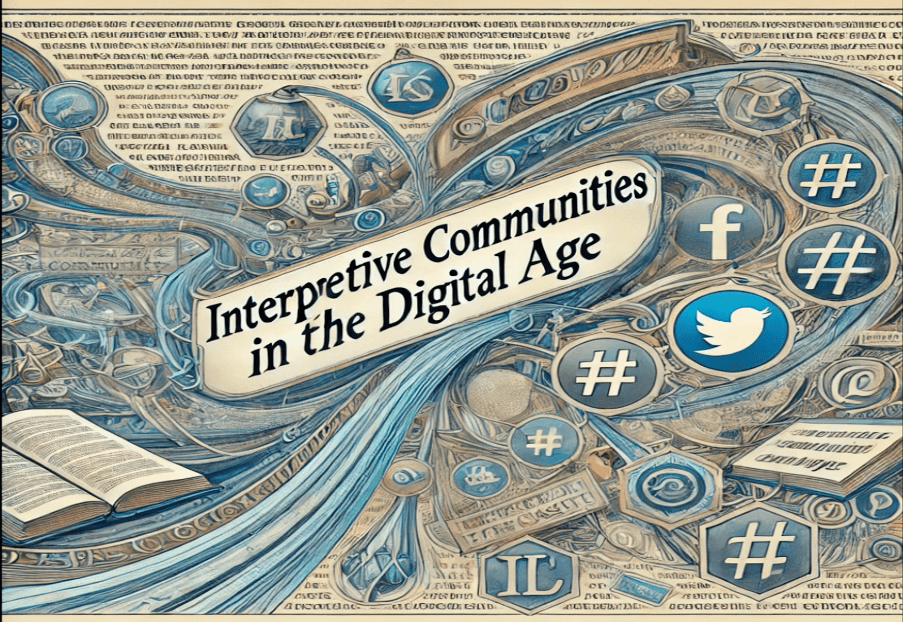

Imagine a tulip! Now let me tell you that every tulip is a flower. What is the difference between these two sentences? Among the many differences, the first invites you, diligent reader, to do something, whereas the second does not. Arguably, the first sentence is somehow incomplete without your cooperation. You have to do some imagining, and it is not set in stone that what you do is entirely predictable to me, the author of that imperative clause. For one thing, I can’t anticipate the colour of your imagined tulip, although I’m almost certain that you imagined a coloured tulip, not a colourless one. In what follows, I’d like to suggest that certain (philosophical) texts demand our cooperation. They do this not just in the sense that our reading might be less boring if we actively think along. Rather, our thoughts and experiences are part of the argument or project. I think this suggestion matters greatly, because this cooperative feature of texts is often ignored, especially in early modern texts. Hence, the way early modern arguments work is often misunderstood.

As some of you know, I’ve just co-organised a workshop on reading as a social practice.* While my head is still brimming with my interlocutors’ thoughts, which I have yet to work through, I’ll try to wind down by following up on an issue that has occupied my mind since I began pondering my Socializing Minds, and that came to the fore again when I listened to a brilliant talk by Dana Jalobeanu on “Interactive Reading of Francis Bacon’s Novum Organum” (you can find the abstract here). According to her, Bacon’s

“Novum Organum was actively read in a distinctive, collaborative manner that requires careful reconstruction. Focusing on the early Royal Society, I show that some of the virtuosi pooled their intellectual resources to decipher and interpret Bacon’s text. Their reading practices were not solitary acts of comprehension, but collective efforts to engage with, extend, and enact Bacon’s larger project. Rather than treating the Novum Organum as a self-contained treatise, these readers approached it as a repertoire of experiments (“instances”) and methodological exemplars, and as a “to-be-completed” fragment of the broader, unfinished Instauratio Magna. They became active collaborators, interpreting, testing, and “relieving” (Beale, 1666) parts of Bacon’s work, subsuming the results into their own projects.”

In the discussion of the paper, we considered whether the prompting of such interactive reading might have been a more common strategy. I vividly remembered Locke stating that he makes his argument for the origin of ideas dependent on the readers’ cooperation:

“I suppose what I have said in the foregoing Book will be much more easily admitted, when I have shown whence the understanding may get all the ideas it has; and by what ways and degrees they may come into the mind;—for which I shall appeal to every one’s own observation and experience.” (Locke, Essay II, I, 1)

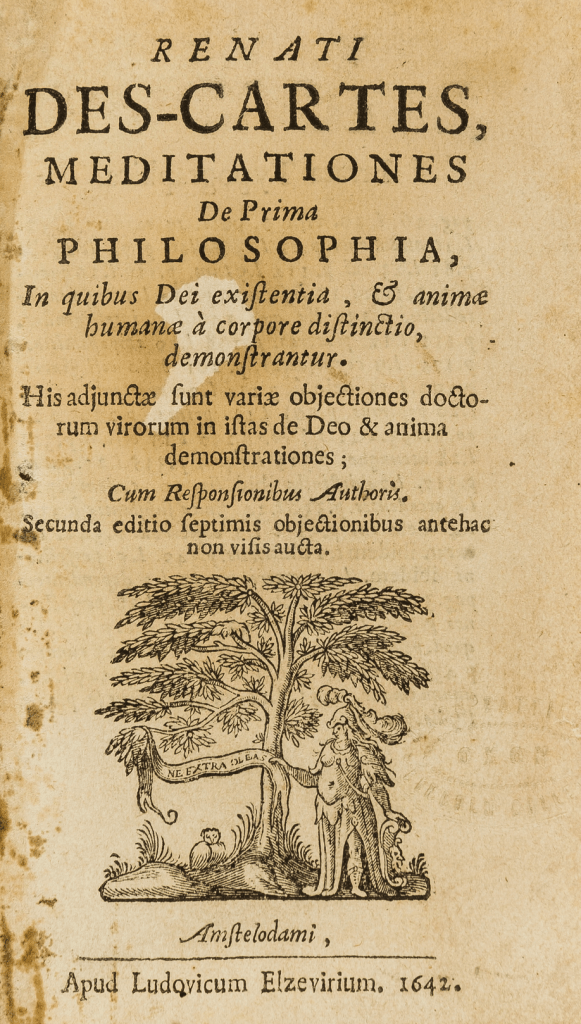

This appeal is not a mere trope. The argument relies on the readers’ experience. Likewise, Descartes’ Meditations are not a solitary exercise. Apart from the fact that Descartes was adamant that they be published with the Objections and Replies, he insists from the beginning that the reader meditate with him. (See this post and podcast for Andrea Sangiacomo’s take on meditation and the Meditations.)

As Catarina Dutilh Novaes has shown in her The Dialogical Roots of Deduction, logical and mathematical thinking have often been designed as dialogical activities in ancient and medieval contexts. While she paints an intriguing picture of this practice, she repeats the assumption that early modern authors pushed it to the fringes in favour of a mentalistic and individualistic understanding of reasoning (see this paper for a bit more discussion). As I see it, the prompts of collaborative reading show that this picture needs correction.**

At the workshop I asked Dana what she thought might make us so oblivious to this collaborative strategy in early modern texts. Her reply was that readings inspired by 19th-century assumptions about philosophy as a systematic endeavour might have contributed strongly to this. Indeed, if you think that a text gives you a system, you will assume that it was designed with the aspiration of providing a systematic whole, that is, something that does not rely on the (contingent and only partly predictable) cooperation of the reader. In this systematic tradition, it’s the text that provides the argument, and the job of the reader is to read the argument off the text. If this assumption is correct, philosophical texts that come with the ambition of presenting a whole and perhaps closed system will involve few or no prompts to the readers (I haven’t checked this properly, but it’s something that suggests itself).

Of course, this doesn’t mean that the 19th century leaves us with a final shift to systems, or at least to texts that merely count on the readers’ silent comprehension. If you think of texts appealing to future readers, as for instance in Nietzsche or in attempts at ameliorative conceptual engineering, you recognize something like a trend of collaborative reading, which Eric Schliesser calls “philosophic prophecy”. According to him, philosophic prophecy is involved in the business of coining concepts that “disclose the near or distant past and create a shared horizon for our philosophical future.” In this spirit, we might think of such texts as prompting collaborative concepts, arguments or entire collaborative projects. Accordingly, it might make sense to distinguish, inter alia, between texts that prompt active reading and texts that merely demand passive reading (at least for the fulfilment of the invoked argumentative purposes).

Why don’t we recognize this when we’re met with it, then? Does the common paper model, favouring the defence of claims, make us blind to these reading strategies? Be that as it may, perhaps the advancement of prompts for LLMs will raise awareness of this feature again.

______

* I’m enormously grateful to everyone at this fabulous workshop. Special thanks to Dana Jalobeanu and Valentina Sperotto for discussing this mode of early modern reading.

** Catarina Dutilh Novaes makes the following clarification (on Facebook): “Just to clarify, my claim about the mentalistic conceptions of reasoning in the early modern period pertain primarily to logic, and how they talked about logic. It doesn’t mean that in their practices of reasoning, i.e. when engaging in philosophical inquiry, these authors were not themselves using dialogical, social strategies.”

On behalf of Irmtraud Hnilica and myself, I’m very happy to announce that our project on Reading as a Social Practice now has its own website. Since the project is intended to continue for years to come, we’ll keep feeding the site with all sorts of news and findings. We’re also hosting a Facebook group on the topic. So please pay a visit and spread the word.

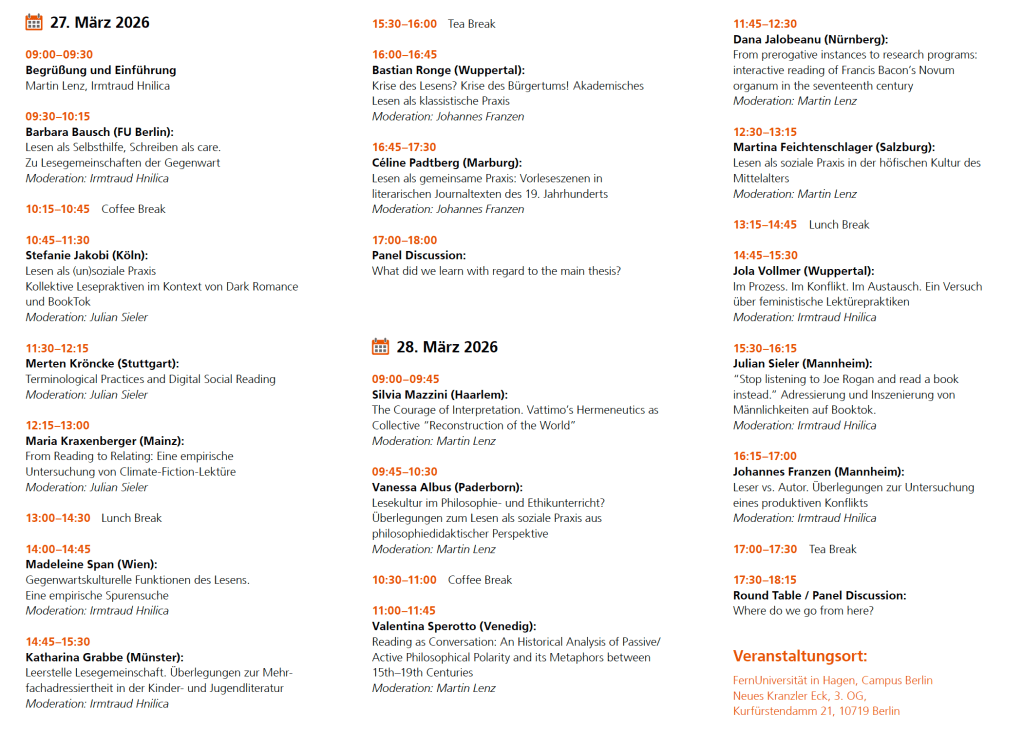

Following the CfP in October 2025, I’m thrilled to announce the programme of the interdisciplinary workshop on Reading as a Social Practice, organized by Irmtraud Hnilica and myself. We are excited about the range of perspectives brought together in this event. Further information can be found below, and we would be happy to answer any questions.

According to a widely held consensus, we are currently experiencing a reading crisis. This workshop aims to take a step back from the rhetoric of decline and instead ask how reading itself can be conceptualized and examined from different disciplinary perspectives – particularly in philosophy and literary studies. As an initial approach, we propose that reading is shaped not only by the texts themselves or by individual readers, but significantly by the interactions among readers. The workshop brings together researchers who discuss reading as a social practice from historical, theoretical, and contemporary perspectives. In doing so, it opens a space for interdisciplinary exchange and lays the foundation for future collaborations and a shared network.

Here you can find the video of my inaugural lecture “Reading as a Social Practice: On Objectivism in the Reading of Texts” (Lesen als soziale Praxis: Über den Objektivismus im Lesen von Texten), delivered on 10 December 2025 at the FernUniversität in Hagen. If the video cannot be played at the moment, you can find the original version here on the FernUni website. An English translation of the text can be found here. The text of the lecture is below the video.

Reading as a Social Practice: On Objectivism in the Reading of Texts

Let me begin with a question for my colleagues in philosophy: How can we spend an entire research career on a single chapter of Aristotle’s writings and at the same time believe that we can get through reading a student term paper in about two hours? After all, both texts can be equally enigmatic.

I have raised this question several times and received very interesting answers. What the question, as well as the many justifications offered in reply, clearly reveals, in my view, is our reading culture. The difference is, of course, readily justified by pointing to the professionalization of reading. Nevertheless, as scholars and teachers we are role models within our disciplinary cultures. So let’s take a closer look! At least with regard to the type of text (Aristotle here, a student paper there), there should be no serious difference: both are, broadly speaking, academic texts. The really serious difference lies instead in a social factor which, drawing on Miranda Fricker, one could call epistemic injustice. It is not some property of the texts, but certain presuppositions of the community of readers that lead to this injustice. These presuppositions are not simply your or my private opinions about Aristotle; rather, they are structurally anchored, or institutionalized, in a long history, namely in the form of an established canon that ranks so-called classics above students.

Now you might say: Well, fine, that happens. But such presuppositions are surely external to the process of reading itself; they are context, an accessory, as it were, but hardly central to engaging with a text. The text has to be decoded on the basis of its own immanent features and thus stands on its own. Objectively.

This almost classic objection is quite typical, not least in philosophy but also in other disciplines, which is why my lecture will mainly be concerned with refuting it.

However, my aim here is not merely to pursue a small feud. Rather, I consider the question of what reading is to be a fundamental question of philosophy. Surprisingly, with few exceptions, this question is hardly ever addressed in philosophy. And yet reading, especially the close reading and reconstruction of written texts, is one of the core businesses of philosophy, if not the core business. But if you ask colleagues how they read, you often hear (and this is no joke): “I just read.” It seems to me, however, a great omission not to examine the conditions of one’s own activity, that is, reflexivity in reading, in their own right. That is why, together with my colleague Irmtraud Hnilica, I now want to develop an interdisciplinary long-term project on reading as a social practice. The thesis that reading is a social practice means precisely what the objection just mentioned denies: that the social factors of reading are not accessories, but central to reading and to the development of quite different reading cultures.

In what follows, I would therefore first like to look at our reading culture, which encourages this objection insofar as it takes texts to be something objectively given. Here I’m interested in the question of how it has come about, and since when, that we take texts to be something objectively given. Second, this question will reveal that the presumed objectivity of texts is an illusion. Third, I would like to sketch what I take reading to be. So that you can warm up mentally, I’ll tell you right now that we may understand reading best if we consider it by analogy with singing songs, namely as a cyclical and ritualized activity. It is the properties of this social activity that are objectifying. Fourth, I would like to indicate how the persisting illusion leads to a degenerative mechanization of reading. Finally, I would like to ask how this approach could help us, in practice as well, to understand our own and other reading cultures.

I. On the Foundations of Objectivism in Philosophy

Let us begin once more with the objection that treats texts themselves as something objectively given. If we take the objection seriously, it should be possible to point to marked differences between the texts of a student and those of Aristotle that justify the different amounts of effort. Before we can even look at the texts themselves, however, the past, our past, catches up with us. Whether we like it or not, we stand in a tradition that treats certain texts as sacred. Aristotle belongs to this tradition as an author; for almost 1000 years he was regarded as philosophus, the philosopher par excellence. The attempt to read this author’s texts as the consistent utterances of a genius can be found even among his fiercest opponents. The sacralization or, to put it more cautiously, canonization of Aristotle’s and other works has been followed, at the latest since the so-called Enlightenment, by a markedly different reading culture. In contrast to the extensive commentary literature of antiquity and the Middle Ages, we repeatedly, and with increasing emphasis, find close reading being displaced by the cultivation of so-called thinking for oneself (Selbstdenken). Schopenhauer, for instance, writes:

“When we read, someone else thinks for us: we merely repeat his mental process. It is like the pupil who, when learning to write, traces over with his pen the strokes the teacher has drawn in pencil. Accordingly, when we read, the work of thinking is for the most part taken off our hands. Hence the palpable relief when we turn from occupying ourselves with our own thoughts to reading. For the same reason, whoever reads a great deal, almost the whole day, and in between recovers with thoughtless pastimes, gradually loses the ability to think for himself …” (1851, § 291)

Interestingly, Schopenhauer’s pessimism about reading is motivated by concerns similar to today’s warnings against social media, which likewise voice the claim that our capacities to read and think are in decline. If Schopenhauer were right, perhaps we should simply stop reading, shouldn’t we? But it is precisely the assumption that the text contains the thoughts of others, which we merely retrace in reading, that consolidates objectivism about texts. Accordingly, certain texts come to be regarded as harmful. As early as the late 18th century, people, in Germany in particular, had railed against “reading addiction” (Lesesucht), with young people and women counted among the “risk groups”. At the same time, the historical-critical method was taking shape in the theological and historical disciplines.

And in philosophy, alongside a methodically grounded canonization of classics, notably by authorities such as Kuno Fischer, the early 20th century saw a decided revival of the early modern Royal Society’s efforts to establish an ideal language for the sciences, a language that promised to make the corresponding texts objective frames of reference for describing the world.

One peculiarity we still share with the early 20th century is the idea that written texts can be rationally reconstructed by separating their arguments from historical and rhetorical embellishment. In this way one moves from the surface of the text straight to its deep structure, can write down its logical form, and can reformulate its core sentences as premises and conclusions. This idea, of course, suggests that the argument is contained in the text and that one can look for it there after some introductory instruction. Accordingly, much of current philosophy didactics is concerned not with reading itself but with the analysis of arguments. Meanwhile, the wave of Critical Thinking understood in this way has spread beyond philosophy to everyone who wants to teach competences of one kind or another.

Of course one should learn how to analyse arguments, but one should know what exactly one is doing. One offers a particular translation by means of omission and substitution. In doing so, one claims on the one hand that the argument is in the text, and on the other hand that the argument remains invisible without translation. Beginners in particular are often led to believe that there is one correct reconstruction.

But let’s take a look at this. As an illustration, take the famous last sentence of Wittgenstein’s Tractatus Logico-Philosophicus: “Whereof one cannot speak, thereof one must be silent” (Wovon man nicht sprechen kann, darüber muss man schweigen).

These interpretations conflict with one another, yet each can be supported not only by the quoted sentence but also by the contexts of the Tractatus and of the later writings.

Once you have seen how many conflicting reconstructions exist even of other classic texts, you will start to have doubts about the objectivity of texts. Clearly, the analysis of such arguments rests essentially on an agreement among logically trained readers, in which the original text itself is often regarded as an obstacle. Yet instead of focusing on how reading takes shape through this process of negotiation among readers, people go on acting as if they were working on optimizing the reconstruction of a classic. What emerges here could easily be called fan fiction.

If one takes this seriously, and not merely as polemic, it is clear that philosophy, in certain schools, sits in close proximity to quite different literary genres. But even the insistence on philologically rigorous reading usually treats the text as the source of the doctrines and forms of thought derived from it, as the general distinction between primary and secondary texts also suggests. Overall, this assumption about reading can be called objectivism.

How, then, should we understand this objectivism of our reading culture?

II. The Text as a Possibility for Readings in Interpretive Communities

By objectivism I mean the assumption that what we believe we take from a text is to be found in the text itself. On the one hand, this is a correct assumption, for all readers will confirm that they derive their interpretations from the texts. Of course, one must add that a text can indeed be read as a chain of propositions that are decodable and on whose presence most readers will be able to agree.

On the other hand, it is a misleading assumption, as can already be seen from the endless disputes about interpretations. Just think of the Wittgenstein quotation. If this is true, objectivism is correct on the one hand and misleading on the other. Correct on the one hand, misleading on the other? Am I contradicting myself here? I ask for your patience. To resolve the contradiction, one has to see that a text is not identical with its reading. The text is a possibility for reading, whereas a reading is the realization of possibilities that lie in the text. Following James Gibson, one can speak of affordances that the text offers. As Sarah Trasmundi and Lukas Kosch have shown, a text offers you various possibilities for action, or possibilities for reading. Which possibilities you actually take up in your reading depends on further factors. These factors, or so I claim, are predominantly social. Concretely, this means: whether you read a text one way or another, that is, which meaning you take from it, depends on your interactions with other readers.

Of course, you usually don’t notice this, because, especially in our reading culture, you are often alone with a text. But in fact you were never alone with a text: as a child you were, hopefully, read to. As a pupil you were constantly corrected by others. And now? Now that you are an adult, you hear voices. Not in the pathological sense. Your interactions with other readers have simply become mostly implicit, congealed, as it were, into habits, indeed traditions. Following Stanley Fish, I would like to call a group that shares certain interpretive habits an interpretive community; Fish locates the negotiation of textual meaning in such “interpretive communities”. You have learned to read menus, and you know what you can do with them. And you won’t confuse a menu with a poem, will you? Even before you skim the paper on your desk, you know that it contains an argument, because it is a philosophical text; if it contained no argument, it would not be a philosophical text at all. So the tradition of your interpretive community would have it.

It is thus precisely because a text is not identical with its reading, yet offers possibilities for readings, that we readily fall prey to objectivism. The customs of certain interpretive communities are thereby passed off as properties of the text itself. From this perspective, objectivism about texts themselves is an illusion.

Now you might say: “Oh, it’s not that bad. Whether I believe I find these customs in the community or in the text itself doesn’t matter; the main thing is that I find them!” That may well be. But it becomes a problem when you are looking for something and assume it is in the wrong place.

III. Reading Works Like Singing

So how does reading work? Of course, there is much to be said about this. But essential points become clear if we consider reading by analogy with singing songs. Let us first look at objectivism once more.

Written texts, it seems, have two important properties that we also ascribe to objective things: constancy, or repeatability, and shareability. When I close a book, it seems, the text remains constantly there; at least I can read it repeatedly. And when I lend you the book, it seems, you can read the same text as I do. Thus these properties of repeatability and shareability seem to belong to the text itself.

On closer inspection, however, matters look different. For these same advantages also arise in an activity as far removed from being an object as singing.

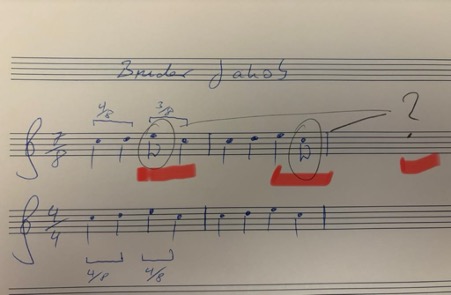

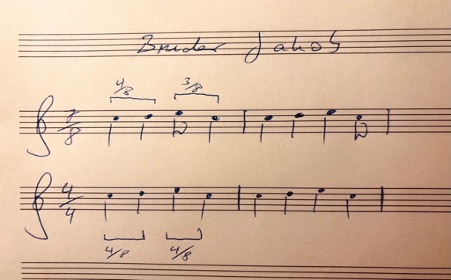

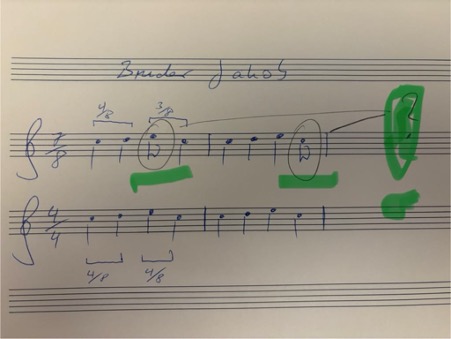

Have a listen: https://www.youtube.com/watch?v=eV-7EJkmsd0&list=PLiAJPLHMA7zAKmiFzDc_gruaa6kpt_DhN&index=7

You are now listening to “Bruder Jakob”, or “Frère Jacques”! Most of you will not only know it but will be able to sing it effortlessly even if you are jolted out of sleep at 3 a.m. Here, too, the song remains constantly in your memory, and you can repeat the singing. Moreover, others can sing the same song. And you would recognize it even if someone sang it out of tune.

The song thus has a certain objectivity: it is independent of our spontaneous performance and of our imagination. But it does not have this objectivity because it is fixed in writing somewhere. If you listen closely, you will notice that the song is played here, first, in 7/8 time instead of the usual 4/4 time and, second, with much richer harmonies; and yet you still recognize it. The relational object, however, is not a text; there is no object you could point to. Nevertheless, it seems to be an objectively given point of reference. So what establishes objectivity amid all this variation is not objecthood but these two properties: repeatability and being shared with others. Being shared, that is, being repeatable by others, plays the decisive role here! Why? Because without social sharing I could not be corrected in my repetitions. On my own, I could take any nonsense for a repetition.

Only in agreement with others can there be anything like a correct, or genuine, repetition. (This is the consequence I draw from Wittgenstein’s private language argument.) Only when you affirm that the 7/8 version, too, is “Bruder Jakob” does it count as “Bruder Jakob”.

For precisely this reason, the following holds for singing as for reading: it is not objecthood but shared repetition, that is, correct repetition, that establishes objectivity. What singing has in common with reading here is that both are embedded in a long history of social interaction.

As with reading, you perhaps first experienced singing by being sung to, and this happened repeatedly, in an embodied way, together with others, and perhaps even as a ritual. In the same way, you were at first repeatedly read to in typical situations: reading and listening were embodied, perhaps in bed with a book and pictures; shared, perhaps with your mother or your father, perhaps with other children; and perhaps part of a bedtime ritual that shaped your expectations and structured the evening. Singing, like reading, is inscribed in you, as it were, as a ritual. That is how we learn it. Reading is embedded in these biographical histories, not only in an abstract tradition.

In my view, it is precisely these factors, and especially repeatability and commonality, that lend objectivity to what is read; the text “in itself”, like the song, offers at most a possibility for this.

What, then, does this analogy with singing give us? First, it shows how, with respect to the objectivity factors of repeatability and shareability, we attribute to texts themselves an objectivity that we actually derive from their social embedding; unlike texts, songs offer no object to point to at all. Second, it directs us to decisive social places and situations: if we want to take reading seriously, that is, the negotiation of meanings among readers, we have to go to the places where such negotiation actually happens. Accordingly, a philosophical engagement with an 18th-century text would have to turn to the culture of letter-writing and the salons, and more generally to the establishment of conversation as a site of thinking. While for many of us it is entirely natural to have conversations about texts, this form, conversation, itself came into being at some point and, as one of the conclusions I draw from my guiding thesis, plays the decisive role in the meaning, or use, of texts of certain genres. Alongside the peer-review process, conversation is a decisive site where philosophical reading culture in particular takes place. Accordingly, you can locate the different interpretations of Wittgenstein in quite different discussions or communities: the positivist interpretation in the Vienna Circle, the mystical one with Elizabeth Anscombe, the therapeutic one with, for example, Peter Hacker.

The basic idea, then, is this: the objectivity ascribed to texts is an illusion, suggested by those properties of reading that actualize the affordances lying in the text, and projected back into the text. Reading as a social practice is (like singing) repetitive and available in socially diverse forms. It is not the text but social reading that establishes objectivity.

As Suresh Canagarajah writes: “Meaning has to be co-constructed through collaborative strategies, treating grammars and texts as affordances rather than containers of meaning.”

This is exactly the point I am trying to make: texts do not contain meanings; they offer affordances, or possibilities.

IV. The Consequences of the Illusion: The Degeneration of Objectivism into Mechanical Reading

If what I have said is correct, it may also be that certain reading cultures disappear again or change. But this does not necessarily mean that we are forgetting how to read; perhaps it only means that the kind of reading, and the places where meanings are negotiated, can change. Incidentally, one notices this not only with regard to more recent technologies but also in everyday work, especially in teaching. I do believe, however, that the still widespread illusion that texts themselves are objective leads to a degeneration of our reading culture. And here I return to my opening observation that we spend a lifetime with a chapter of Aristotle but perhaps only two hours with a student paper.

This practice, which is connected, incidentally, with increasing literacy, the so-called mass university, and the simultaneously stagnating number of teaching staff, is initially experienced, one might say, as the result of external political pressure. And yet we increasingly settle into it, to the point that the requirements for student writing, at least in the Netherlands and Great Britain, have themselves become so schematic that one actually believes one can judge after 20 minutes of reading whether they have been met. Such a mechanization of writing and reading can of course only be justified if one believes that texts themselves are objective entities that are accordingly either good or bad. Incidentally, this mechanization is not a consequence of ChatGPT. Rather, it is the other way round: a reading culture that is changing in this direction consistently learns to make use of such a technology.

From the Netherlands, I know that the mechanization of reading already takes hold in primary schools, where children are taught begrijpend lezen (reading comprehension) from the very start, so that questions about text structure can be tested in multiple-choice exams, and then people are surprised that most young people have no desire to read.

It seems that such uninspired models produce readers who, as producers of exam texts, in turn fabricate texts that are hardly ever read. So why should one still write them oneself? Why read them oneself?

All of these are developments that, at least at universities, cannot be described independently of the introduction of New Public Management in the 1980s. (What good is it to tell a student to look into the text in order to understand it, if hardly anyone beyond the classroom cares about this text? No, it is not that universities are ivory towers; rather, cultural deserts have formed around the universities, in which we mainly see stakeholders instead of interpretive communities. But this criticism is old and also a bit one-sided.)

For, of course, they do exist: places where the meaning of texts is still negotiated. We find them at literature and even philosophy festivals, on social media under #booktok, in reading groups that are often organized by students, and, of course, in our teaching and research events. Here, reading is sometimes so explicitly social that it is practically performed. This, too, is of course not entirely new. If we are interested in the foundations of reading, we have to go to these places.

What is particularly interesting for our reading culture, I think, is that Large Language Models erode trust not only in the authenticity but also in the objectivity of texts. We are witnessing a massive desacralization of the text. For unlike the divine authority presumed behind biblical texts, we now constantly suspect a deceiving demon. Accordingly, I believe that the academic revolt against this desacralization is also a revolt against the death of the illusion that quality is inherent in texts themselves.

To name this desacralization is not to swallow the grand promises of the relevant producers of AI products. But we can also use this technology to make ourselves aware that it is not the texts themselves, but our reading, our singing, our rituals that create meanings and turn them into something shared.

V. A Few Conclusions

What, then, follows from these insights for practice? How can such findings help us improve our reading practice? First, I would like to remind you that our research project is only just beginning. But if the meaning of texts is essentially unlocked in reading through the interactions between readers, then it helps not to stare into the text itself, but always to ask oneself first: What do I expect from this text? What am I assuming it is supposed to tell me? Is it supposed to provide me with an argument for something? What do I do if the text doesn’t meet my expectations? Should I humbly conclude that I’m too stupid for it? That I don’t belong to the club of readers who say of themselves that they understand or even love such texts? And why is this tome on my desk or in my Adobe Reader in the first place?

Once you have confused yourself sufficiently with these questions, you can actually look into the text and see what is written there, without immediately searching for the argument. People always say that you shouldn’t just read, but read thoroughly: But what does “thoroughly” mean? Should I choose particularly many colors to mark the incomprehensible passages? Seriously: this instruction is about as helpful as telling someone to concentrate. How do I do that? Stare into the air and roll my eyes cleverly? – How do you even know that you have concentrated well enough? When you can say something that your conversation partner kindly nods along to? With a menu I can order, with a poem I can sound good, but what do I do with a philosophical text? When have I understood something there? We can still only see that in conversation. – Is that enough?

Well, a fundamental insight that follows from my considerations on the theory of reading is that a philosophical text offers possibilities or affordances, and thus always different possibilities for reading. It is a myth of completeness, particularly prevalent in analytic philosophy, that all implicit possibilities can simply be made explicit. But such completeness contradicts the necessary openness or underdetermination of texts. Think again of the Wittgenstein sentence. Another very memorable illustration of this is the duck-rabbit, which, in terms of possibility, remains precisely both. My project, then, would not be to reconstruct the one true argument, but to lay open different and possibly conflicting possibilities. Accordingly, one must accept that the text allows for various interpretations, which are only arrived at within different interpretive communities.

At this point, philosophers usually develop a typical fear of relativism. But as Stanley Fish already noted, emphasizing possibilities is not a matter of relativism, but of plurality. And such plurality by no means leads to arbitrariness. What, then, are the limits of this space of possibilities? First, there are of course propositional limits: you cannot say that a text asserts not-P if it explicitly asserts P. Unless you detect signs of irony. Already here, the question of limits becomes difficult again; and you will decide one way or the other. Beyond that, there are situational conditions of appropriateness. If someone asks the way to the station, it is not appropriate to answer, loosely following Robert Frost, by musing on the road less traveled. Just as one should not strike up Bruder Jakob at an inaugural lecture. Or should one? Of course we can break with conventions. It is open, for example, whether you sing the song in 4/4 or in 7/8 time, or reharmonize it psychedelically with sus chords. The convention gives you something you can play or sing with.

Accordingly, Alva Noë makes a key point when he compares philosophical texts to scores for thinking, which can be interpreted in very different ways:

“What the philosopher establishes in their labors are not truths or theses, but rather scores, scores for thinking with. … The philosophy lives for us like a musical score that we – students and colleagues, a community – can either play or refuse to play, or wish that we could figure out how to play, or, alternatively, wish that we could find a way to stop playing.”

I would only add that, by analogy with musical notation, philosophical texts can bring a multitude of interpretations to life. Here we have not just a duck-rabbit, but a whole zoo of possible aspect shifts.

Now, all this may sound very nice. But one must not forget that interpretations are chosen not at will, but above all with a view to social belonging. If you choose a particular interpretation, you may end up in a club that is not very fashionable at the moment. The problem with my remarks, then, is that they can be taken in quite different ways. Academics in particular fear reputational costs; that is why they are very reluctant to admit that they don’t understand something. After all, “I don’t understand this text” usually means something more like “The author is too stupid to explain it to me properly.” If, by contrast, you can express incomprehension earnestly and sincerely, you have really taken a step forward. But one must, in a sense, be able to afford such humility. That is why it is not enough to seek conversation; you have to overcome your shame. Nor can you learn to sing well if you are too afraid of wrong notes.

At some point, though, you can really begin to name the obscure passages and ask yourself where exactly, and for what reason, you can no longer make headway. Reflected confusion is then a genuine way into conversation. For if a text offers the possibility of understanding it, it also offers the possibility of not understanding it.

Let me begin, once more, with a question for my colleagues in philosophy: How can we spend a lifetime on a chapter in Aristotle and think we’re done with a student essay in two hours? Both can be equally enigmatic.

I have raised this question several times and received some very interesting answers. What the question, as well as the numerous justifications, clearly reveal, in my opinion, is the state of our reading culture. The difference between the amounts of time spent on such texts is, of course, often justified with regard to the professionalization of reading. Nevertheless, we as scholars and teachers are role models in our respective disciplines. So let’s take a closer look! At least with regard to the type of text (a piece of Aristotle’s work and a student paper), there should be no significant differences: both are, in a broad sense, scholarly texts. The truly significant difference lies instead in a social factor, which, following Miranda Fricker, could be described as an epistemic injustice. It is not any particular characteristics of the text, but rather certain presuppositions held by the community of readers that lead to this injustice. These presuppositions are not simply your or my private opinions about Aristotle, but are structurally embedded or institutionalized in a long history, namely in the form of an existing canon that prioritizes so-called classics over the work of students.

Now you might say: Well, that may be so. However, such presuppositions are external to the act of reading itself, contextual, incidental, so to speak, but not central to engaging with a text. The text, due to its inherent characteristics, must be decoded, so to speak, and thus stands, as it were, on its own. Objectively.

This almost classic objection is quite typical, not least in philosophy but also in other disciplines, which is why I intend to focus primarily on refuting it. However, my aim here is not merely to engage in a petty feud. Rather, I consider the question of what reading is to be a fundamental question of philosophy. Surprisingly, with few exceptions, philosophy does not address this question. Yet reading, especially the careful reading and reconstruction of written texts, is certainly among the core businesses of philosophy. But if you ask colleagues how they read, you often hear (and this is no joke): “I just read.” It seems to me, however, a major oversight not to consider specifically the conditions of one’s own activity, that is, the reflexivity inherent in reading. In keeping with my long-term project with Irmtraud Hnilica, my thesis that reading is a social practice means precisely what the aforementioned objection denies: that social factors in reading are not merely incidental, but central to reading and to the development of quite different reading cultures.

In the following, I would therefore like to first take a look at our reading culture, which promotes the aforementioned objection insofar as it considers texts to be something objectively given. Here, I am interested in the question of how and since when we have considered texts to be something objectively given. Secondly, this question will reveal that the assumed objectivity of texts is an illusion. Thirdly, I would like to outline what I consider reading to be. To help you prepare, I’ll tell you now that we might best understand reading by considering it in analogy to singing songs, namely as a cyclical and ritualized activity. It is the characteristics of this social activity that produce objectivity. Fourthly, I would like to suggest how the persistent illusion leads to a degenerative mechanization of reading. Finally, I would like to ask how this approach could help us in practice to understand our own and other reading cultures.

1 On the Foundation of Objectivism in Philosophy

Let’s begin again with the objection that depicts texts themselves as objectively given. If we take this objection seriously, then there should be striking differences between the texts of a student and those of Aristotle, differences that justify the varying effort required. However, even before we can look into the texts themselves, the past, our very own past, will catch up with us. Whether we like it or not, we stand in a tradition that treats certain texts as sacred. Aristotle, as an author, belongs to this tradition; for almost 1000 years he was considered philosophus, the philosopher par excellence. Even his fiercest opponents attempt to read his texts as the consistent pronouncements of a genius. The sacralization, or, to put it more cautiously, canonization, of Aristotle’s and other works has been followed, at least since the Enlightenment, by a distinctly different reading culture. In opposition to the extensive commentary literature of antiquity and the Middle Ages, there is a recurring and increasingly emphatic push to replace close reading with the cultivation of so-called independent thought. For example, Schopenhauer* writes:

“When we read, someone else is thinking for us: we merely repeat his mental process. It is like when the student learns to write with the pen going over the pencil marks of the master. So when one reads, most of the thought-activity has been removed from him. Hence the palpable relief we perceive when we stop to take care of our own thoughts and move on to reading. While we read, our head is truly an arena of unknown thoughts. But if we take away these thoughts, what’s left? So it happens that those who read a lot and for most of the day, in the meantime relaxing with a carefree pastime, little by little lose the ability to think – like one who always rides a horse and eventually forgets how to walk. This is the case of many scholars: they have read to the point of becoming fools.” (Schopenhauer 1851, § 291)

Interestingly, Schopenhauer’s pessimism regarding reading is motivated by concerns similar to today’s warnings against social media, which likewise assert the decline of our reading and thinking abilities. If Schopenhauer were right, perhaps we should give up reading altogether, shouldn’t we? But it is precisely the assumption that a text contains the thoughts of others, which we merely follow through reading, that solidifies objectivism in relation to texts. Not surprisingly, certain texts were considered harmful. As early as the late 18th century, there was much criticism of “Lesesucht” (reading mania), particularly in Germany, with young people and women being considered “at-risk groups” in particular. At the same time, the historical-critical method was established in theological and historical disciplines. And in philosophy, alongside a methodologically grounded canonization of classics, notably by authorities like Kuno Fischer, the beginning of the 20th century saw a distinct revival of the early modern Royal Society’s efforts to establish an ideal language for the sciences, a language that promised texts serving as objective reference systems for describing the world.

One characteristic we still share with the early 20th century is the idea that written texts can be rationally reconstructed by separating arguments from historical and rhetorical embellishments. This allows one to move directly from the surface of the text to its deep structure, to note the logical form, and to reformulate the core statements into premises and conclusions. This idea naturally suggests that the argument is embedded in the text and that one can search for it there—after some introductory instruction. Accordingly, much of current philosophy didactics is concerned not with reading itself, but with the analysis of arguments. Meanwhile, the wave of Critical Thinking, understood in this way, has also spread beyond philosophy to all those who want to teach any kind of competence.

Of course, one should learn how to analyze arguments, but one should also know precisely what one is doing. One is offering a specific translation through omission and substitution. On the one hand, it is claimed that the argument is contained within the text, but on the other hand, that the argument remains invisible without translation. Beginners are often led to believe that there should be one correct reconstruction.

Let’s take a closer look. To illustrate this, let’s consider the famous last sentence from Wittgenstein’s Tractatus Logico-Philosophicus: “Whereof one cannot speak, thereof one must be silent.”

– Firstly, you can interpret the sentence positivistically: as a restriction to what can be meaningfully said by the natural sciences. (In this case, you interpret the “must” as descriptive.)

– Secondly, you can interpret the sentence mystically and ethically: as a prioritization of the unspeakable as what is truly important. (In this case, you interpret the “must” as normative.)

– Thirdly, you can interpret the sentence as self-contradictory and, in this sense, therapeutic: because it speaks precisely of something about which one cannot speak. (The “whereof” names something that the anaphoric “thereof” then marks as unspeakable.)

These interpretations contradict each other, but can be validated not only by the quoted sentence, but also by the contexts of the Tractatus and later writings. Once you have seen how many conflicting reconstructions of this and other classics exist, you might be quite puzzled by the idea of textual objectivity. It’s clear that the analysis of relevant arguments relies heavily on communication between logically trained readers, where the original text itself is often seen as an obstacle. Instead of focusing on how the negotiation process between readers shapes the reading experience, however, the approach remains one of optimizing the reconstruction of a classic. What emerges could easily be described as fan fiction.**

If we take this seriously and not merely as polemics, it becomes clear that philosophy, in certain schools of thought, is indeed in close proximity to entirely different literary genres. But even the insistence on philologically rigorous reading generally takes the text as the source of the doctrines and modes of thought derived from it, as is also suggested by the general distinction between primary and secondary texts. Overall, this assumption regarding reading can be described as objectivism. But how should we understand this objectivism in our reading culture?

2 The Text as a Possibility of Readings in Interpretive Communities – Objectivism as an Illusion

By objectivism, I mean the assumption that what we believe we have gleaned from the text is actually found within the text itself. On the one hand, this is a correct assumption, because all readers will confirm that they derive their interpretations from the texts. Of course, it must be added here that a text can indeed be read as a chain of propositions that are decodable and whose presence most readers will be able to agree upon. On the other hand, however, it is a misleading assumption, as can be seen from the fact that there are endless disputes about interpretations. Just think of the Wittgenstein quote. If this is true, then objectivism is, on the one hand, correct, but on the other hand, misleading. On the one hand, correct, on the other hand, misleading? Am I contradicting myself here? – Please bear with me. To resolve this apparent contradiction, we must recognize that a text is not identical to its reading. The text is a possibility for reading, while reading is the realization of the possibilities inherent in the text. Following James Gibson, we can speak of affordances that the text offers. As Sarah Bro Trasmundi and Lukas Kosch have shown, a text offers you various possibilities for action or reading. Which possibilities you ultimately choose in your reading depends on further factors. These factors are—so I argue—primarily social. Specifically, this means that whether you read a text in one way or another, and thus what meaning you derive from it, depends on your interactions with other readers.

Of course, you usually don’t even notice this because—especially in our reading culture—you are often alone with a text. But fundamentally, you were never truly alone with a text: As a child, you were, hopefully, read to. As a student, you were constantly corrected by others. And now, now that you’re an adult, you hear voices. Not in a pathological sense. The interactions with other readers are simply mostly implicit, solidified into habits, even traditions. Following Stanley Fish, I would like to call a group that shares certain interpretive habits an interpretive community. Fish locates the negotiation of meaning for texts within corresponding “interpretive communities.” You’ve learned to read menus, and you know what to do with them. And you wouldn’t mistake a menu for a poem, would you? Even before you skim the essay on your table, you know that it contains an argument because it’s a philosophical text—and if it didn’t contain an argument, it wouldn’t be a philosophical text at all. That’s how the tradition of your interpretive community dictates it.

(Taken from this quite insightful podcast.)

It is precisely the fact that a text is not identical with its reading, but rather offers possibilities for reading, that makes us prone to objectivism. The customs of certain interpretive communities are thus presented as properties of the text itself. From this perspective, objectivism with regard to the texts themselves is an illusion.

Now you might say: “Oh, it’s not so bad. Whether I believe I find the customs in the community or in the text itself is irrelevant; the main thing is that I find them!” – That may well be true. However, it becomes a problem when you are looking for something but expect to find it in the wrong place.

3 What Really Generates Objectivity – Reading, Just Like Singing

So how does reading work? Of course, much can be said about it. But essential points can be understood by considering reading in analogy to singing songs. Let us first return to objectivism.

Written texts have two important properties, it seems, which we also attribute to objective objects: constancy or repeatability and shareability. When I close a book, it seems the text is there constantly, or at least I can read it repeatedly. And when I lend you the book, it seems you can read the same text as me. Thus, these properties of repeatability and shareability seem to be inherent in the text itself.

On closer inspection, however, the matter is different. The aforementioned advantages also arise in a seemingly non-representational activity like singing. Listen to this:

You just heard Bruder Jakob (Brother John, Frère Jacques)! Most of you will not only know it, but could also sing it effortlessly even if you were jolted awake at 3 a.m. Here, too, the song remains constant in your memory, and you can repeat it. Moreover, others can sing the same song. And you would even recognize it if someone sang it off-key or changed the rhythm.

The song thus possesses a certain objectivity; it is independent of our spontaneous performance and our imagination. But it doesn’t possess this objectivity because it is written down somewhere. If you listen closely, you’ll notice that, firstly, the song is played in a 7/8 time signature instead of the usual 4/4 time signature, and secondly, that it is much more richly harmonized.

The relational object, however, is not a text; there is no physical object to which you could point. Nevertheless, it seems to be an objectively given point of reference. What establishes objectivity despite all the variance is therefore not the physicality of the object, but rather these two properties: repeatability and sharedness with others.*** Sharedness, or rather, repeatability by others, plays the crucial role here. Why? Because without social sharedness, I could not be corrected in my repetitions. Alone, I could mistake any nonsense for a repetition.

Only in agreement with others can there be anything like a correct or genuine repetition. (This is the consequence I draw from Wittgenstein’s private language argument.) Only when you affirm that the 7/8 version is also “Bruder Jakob” is it considered “Bruder Jakob.”

For precisely this reason, in singing as in reading, it is not the physicality, but the shared repetition, that is, the correct repetition, that establishes objectivity. What singing and reading have in common here is that they are embedded in a long history of social interaction.

As with reading, you may have first experienced singing by being sung to: songs were repeated, embodied, shared, and perhaps even ritualized. In the same way, you were initially read to, repeatedly and in typical situations. Reading and listening were embodied, perhaps in bed with a book and pictures; shared, perhaps by your mother or your father, perhaps with other children; and perhaps part of a bedtime ritual that shaped your expectations and structured the evening. Singing, like reading, is inscribed within you as a ritual, so to speak. That’s how we learn it. Reading is embedded in these biographical narratives, not just in an abstract tradition.

In my opinion, it is precisely these factors, and especially repeatability and shared experience, that lend objectivity to what is read, objectivity to which the text, much like a song, ‘in itself,’ offers only a possibility.

So what does this analogy with singing offer us? Firstly, it clarifies how, with regard to the factors of objectivity (repeatability and shareability), we ascribe to texts themselves an objectivity that we actually derive from their social embeddedness; unlike texts, songs lack any physical object we could point to. Secondly, it points us to crucial social sites and situations: if we want to seriously engage with reading, with the negotiation of meaning among readers, then we must go to the places where this actually happens. Accordingly, a philosophical engagement with an 18th-century text would require us to examine epistolary culture, salons, and, more generally, the establishment of conversation as a site of thought.**** While it is quite natural for many of us to have conversations about texts, this form, conversation itself, arose at some point and (this is one of the conclusions I draw from my central thesis) plays a decisive role in the meaning and use of texts belonging to certain genres. Alongside peer-review processes, conversation is a crucial space where philosophical reading culture, not least, takes place. Accordingly, you can locate the different interpretations of Wittgenstein in very different discussions or communities: the positivist interpretation in the Vienna Circle, the mystical one around Elizabeth Anscombe, the therapeutic one, for example, around Peter Hacker.

The basic idea is thus: The objectivity attributed to texts is an illusion, suggested by properties of reading (actualizing affordances in the text) and projected back onto the text. Reading as a social practice is (like singing) repetitive and socially diverse. It is not the text, but social reading that creates objectivity.

As Suresh Canagarajah puts it: “Meaning has to be co-constructed through collaborative strategies, treating grammars and texts as affordances rather than containers of meaning. Interlocutors draw from other affordances, too, such as the setting, objects, gestures, and multisensory resources from the ecology. Thus, meaning does not reside in the grammars they bring to the encounter, but in the negotiated practice of aligning with each other in the context of diverse affordances for communication. In the global contact zone, interlocutors seek to understand the plurality of norms in a communicative situation and expand their repertoires, without assuming that they can rely solely on the knowledge or skills they bring with them to achieve communicative success.” This is precisely the point I am also trying to make: texts do not contain meanings, but rather offer affordances or possibilities.

4 The Consequences of the Illusion: The Degeneration of Objectivism into Mechanical Reading

If what has been said is true, then it is also possible that certain reading cultures will disappear or change. However, this does not necessarily mean that we will unlearn how to read, but perhaps only that the way we read and the places where meanings are negotiated can change. This is noticeable not only with regard to recent technologies, but also in everyday practice, especially in teaching. I believe, however, that the still widespread illusion that texts themselves are objective is leading to a degeneration in our reading culture. And here I come back to my initial observation that we might be living a scholar’s life with a chapter by Aristotle, while we spend only two hours on a student assignment.

This practice, which, incidentally, is also linked to increasing literacy, the so-called mass university, and the simultaneously stagnating number of lecturers, is initially perceived as stemming from external political pressure—and yet, it is increasingly becoming so entrenched that the guidelines for student text production—for instance, in the Netherlands and Great Britain—are themselves so schematic that one actually believes one can judge after 20 minutes of reading whether the requirements have been met. Such a mechanization of writing and reading is, of course, only justifiable if one believes that texts themselves are objective entities that are accordingly either good or bad. This mechanization is, incidentally, not a consequence of ChatGPT. Rather, it is the other way round: a reading culture that is changing in this direction consistently learns to use such technology.

From the Netherlands, I know that the mechanization of reading is already taking hold in elementary schools, where, from the very beginning, students are taught reading comprehension (begrijpend lezen) in order to test their knowledge of text structure in multiple-choice tests, and then people wonder why most young people have no interest in reading.

It seems that such uninspired role models lead to readers who, in turn, produce texts for exams that are hardly ever read. So why should anyone bother writing them themselves? Why bother reading them?

All of these are developments that, at least at universities, cannot be described independently of the introduction of New Public Management in the 1980s. (What good is it to tell a student to look at the text to understand it if there is hardly any interest in doing so outside of class? No, universities are not ivory towers; rather, cultural deserts have formed around them, in which we see primarily stakeholders instead of interpretive communities. But this criticism is nothing new and also a bit one-sided.)

For, of course, there are places where the meaning of texts is still negotiated. We find them at literature and even philosophy festivals, on social media under #booktok, in often student-led reading groups, and, of course, in our teaching and research events. Here, reading is sometimes so explicitly social that it is actually performed. This, too, is not entirely new, of course. If we are interested in the foundations of reading, we have to go to these places.

What is particularly interesting for our reading culture, I think, is that Large Language Models erode trust not only in the authenticity but also in the objectivity of texts. We are experiencing a massive desacralization of the text. For unlike the divine authority presumed behind biblical texts, we now constantly suspect a deceiving demon. Accordingly, I believe that the academic rebellion against this desacralization is also a rebellion against the death of the illusion that texts themselves possess inherent quality.

To name this desacralization does not mean falling for the grand promises of relevant AI product manufacturers. But we can also use this technology to sensitize ourselves to the fact that it is not the texts themselves, but our reading, our singing, our rituals that create meaning and make it something shared.

5 A Few Conclusions Regarding the Practice of Reading

What are the practical implications of these insights? How can we improve reading practices through such findings? Firstly, I would like to remind you that this research project on reading as a social practice is only just beginning. But if the meaning of texts in reading is essentially unlocked through the interactions between readers, then it helps not to stare at the text itself, but to always ask ourselves first: What do I expect from this text? What am I assuming it’s supposed to tell me? Is it supposed to provide me with an argument for something? What do I do if the text doesn’t meet my expectations? Should I humbly assume that I’m too stupid for it? That I don’t belong to the club of readers who say they understand or even love such texts? And why is this tome even on my desk or in my Adobe Reader?

Once you’ve confused yourself enough with these questions, you can actually look at the text and see what’s written there without immediately searching for “the argument”. People always say you shouldn’t just read, but read thoroughly: But what does “thoroughly” mean? Should I choose lots of colors to highlight the incomprehensible passages? Seriously: This instruction is about as helpful as telling you to concentrate. How do I do that? Stare into space and roll my eyes cleverly? – How do you even know when you’ve concentrated well enough? If you can say something that your conversation partner nods to politely in agreement? I can order from a menu, I can sound good with a poem, but what do I do with a philosophical text? When have I truly understood something? We can still only see this through conversation. – Is that enough, though?

Well, a fundamental insight that follows from these theoretical considerations regarding reading is that a philosophical text offers possibilities or affordances, and thus always different ways of reading it. It is a myth of completeness, particularly prevalent in analytic philosophy, that all implicit possibilities can simply be made explicit. Such completeness contradicts the necessary openness or underdetermination in texts. Think again of Wittgenstein’s famous quote. Another very memorable illustration of this is the duck-rabbit, which, in terms of possibility, remains precisely both. My project would therefore not be to reconstruct the one true argument, but rather to reveal different and potentially conflicting possibilities. Accordingly, one must accept that the text allows for various interpretations, which are only gained within different interpretive communities.

At this point, philosophers usually develop a typical fear of relativism. However, as Stanley Fish already noted, emphasizing possibilities is not about a relativistic position, but about plurality. Such plurality, however, by no means leads to arbitrariness. But what, then, are the limits to this space of possibilities? First, there are of course propositional limits: you cannot say that a text asserts non-p if it explicitly asserts p. Unless, of course, you perceive signs of irony. Here, the matter of limits becomes difficult again; and you will ultimately decide one way or the other. Furthermore, there are situation-specific conditions of appropriateness. If someone asks for directions to the train station, it's not appropriate to respond, paraphrasing Robert Frost, by musing about less-traveled paths. Just as one shouldn't sing Frère Jacques at an inaugural lecture or at a funeral. Or should one? Of course, we can break with conventions. For example, it's entirely up to you whether you sing the song in 4/4 or 7/8 time, or even reharmonize it psychedelically with suspended chords. Convention gives you something to play with, or sing with.

Accordingly, Alva Noë makes a crucial point when he compares philosophical texts to scores for thinking, which can also be interpreted in very different ways:

“What the philosopher establishes in their labors are not truths or theses, but rather scores, scores for thinking with. … The philosophy lives for us like a musical score that we – students and colleagues, a community – can either play or refuse to play, or wish that we could figure out how to play, or, alternatively, wish that we could find a way to stop playing.”

I would simply add that, analogous to musical notation, philosophical texts can give rise to a multitude of interpretations. Here we don’t just have a single, obvious interpretation of a duck-rabbit, but a whole zoo with possible shifts in perspective.

Now, that may all sound very nice. But one mustn't forget that interpretations aren't chosen arbitrarily, but primarily with regard to social affiliation. If you choose an interpretation, you might belong to a club that's currently out of fashion. The problem with my musings, then, is that they can be received in very different ways. Academics, in particular, fear reputational damage; therefore, they are very reluctant to admit their lack of understanding. "I don't understand this text" is usually taken to mean something like, "The author is too stupid to explain it to me properly." If, on the other hand, one can express genuine and sincere incomprehension, one has truly made progress. But such humility is something one has to be able to afford, so to speak. Therefore, it's not enough to simply seek conversation; one must overcome one's shame. You can't learn to sing well if you're too afraid of singing off-key.

But at some point, you can truly begin to name the difficult parts and ask yourself exactly where and why you’re stuck. Reflected confusion then becomes a genuine conversation starter. Because if a text offers the possibility of understanding it, it also offers the possibility of not understanding it.

____

* Thanks to Arnd Pollmann for pointing out this passage.

** I borrow this classification from Charlie Huenemann, but I forget in which of his posts it was introduced.

*** See on repetition in music and language Elizabeth Hellmuth Margulis’ On Repeat as well as Bente Oost’s vlog on this blog.

**** Thanks to Miriam Aiello for conversations on the topic of conversation.

No, this is not about the decline of the occident, just a note about a curiosity in academic philosophy. Philosophy is a discipline in which reading is a key competence, not least in that philosophical exchange often focuses on the precise formulation of a premise or an argument. But while there are numerous guides on writing philosophy or on reconstructing arguments, there is next to nothing on reading. Given that different people reading philosophy often end up with contrary takes on texts (be they historical or contemporary) and given that much energy is spent on singling out proper takes, it is astonishing (to put it mildly) that there is so little reflection on reading. Or perhaps not? One of the first things I took in as a philosophy student is that philosophy is, by and large, an implicit culture where the rules of the game are not expressed but handed down by emulation. However, reading practices are not just about the rules of a specific game. Arguably, such practices make up the often unreflected fabric of our intuitions and ways of life. So understanding our (current as opposed to some other) reading practice will not only yield an understanding of our particular ways but also of why we prefer certain texts and forms of reading over others in the first place. So why do we care so little? Preparing a larger project and a workshop on the issue of reading, I would like to share some encounters and musings.

Text production. – Having been educated as a historian of philosophy, first as a medievalist and, then, as an early-modernist, I have always been intrigued by the fact that texts have to be produced (before they can be consumed) by the historian. Once I became aware that the texts we read in books have come a long way (from picking and transcribing manuscripts into readable Latin, to a critical edition after choosing a leading manuscript, while referencing deviating manuscripts and sources, to a translation, a translation competing with other translations, being published), the material basis of reading and its availability, for whatever ideological or financial reasons, was already a thing to ponder. So, long before we can set eyes on a text, a number of decisions are made that include and exclude authors and whole traditions. When colleagues say they alter the canon by putting a new text on the reading list, I often want to ask why they think that the text is not already part of the canon, especially if it's (fairly) readily available. But that's by the by. The upshot is that reading presupposes the very availability of texts, and that's a highly ideological matter already (or else tell me why everyone referencing medieval philosophy just references Thomas Aquinas).

"Why bother? – I just read." – Still at Groningen University, I once asked colleagues whether we shouldn't compose a reading guide detailing how they approach their respective readings. The standard response was: "Why? I just read. There's nothing much to say." Asking further, they would often detail ways of reconstructing and formalizing arguments that were at once highly technical and subject to change. So, if you're one of these poor souls thinking that there is one good way of reconstructing an argument in a text, just forget about it! It's hard and ongoing work – no matter whether the text is by Plato or Ted Sider. The bottom line is that, no matter whether you're a historian or a staunch analytic philosopher, any reading is highly contestable. Shouldn't this fact give rise to a discussion of how readings are or should be constrained? You'd think so, wouldn't you? But there is literally nowt (which is why I thought it timely to run a conference on the why and how of doing history of philosophy).

Texts versus arguments. – In my first year as a student of philosophy, I was asked to reconstruct an argument by Leibniz. We were supposed to use decimal numbers. My instructor (for those who care it was Lothar Kreimendahl) was not happy: Rather than presenting a list of numbered propositions, I gave what is nowadays called a narrative. I proudly rejected being graded for my supposed failure. But what this taught me was that the distance between the text and its reconstruction can be very long and varied. I got out ok, but I still worry about the poor souls who think there is one true reconstruction or reading of a text. The upshot is that there is no clear way of getting from the text to a reconstruction of an argument. In fact, the text has to be seen in a certain context as speaking to a certain issue in the first place. But how is that known or established? Well, first of all it needs to be established, be it by your instructor, interlocutor or the stuff you're reading about it. So there is no argument to be read off a text. The way of dealing with texts (as e.g. containers of arguments) has to be established already.

Who cares? – Of course, scholars dealing with different periods in the history of philosophy or reading cultures have to care. Doina-Cristina Rusu and Dana Jalobeanu, for instance, taught me that recipes and descriptions of experiments form a specific reading culture that needs to be studied in its own right in order to understand how things were understood and transmitted. The same goes for current philosophy, or so I think, but the implicit culture suggests otherwise. Yet, as long as this culture or cultures remain implicit, I think we’re not even doing proper philosophy (if doing philosophy includes studying the preconditions of one’s thought). So my guess is that we’re mostly doing what Kuhn took to be normal science. We unthinkingly emulate our teachers. But while doing so, we encounter the uncanny: students who don’t care about reading and even produce their writings with the help of LLMs. But funnily enough, in this very situation we insist on a proper distinction between the text reflected on and the text written. My hunch is that it’s our implicit reading culture that leaves us with very few responses to such misgivings. The bottom line is: We need an idea of how texts relate to thoughts etc. in order to handle the situation. But for that, we need to understand the preconditions of reading.

Not even didactics of philosophy? – While practitioners in different philologies and related disciplines seem to care greatly about reading practices, in philosophy the situation is so bad that not even didactics of philosophy have much to offer. Really? Obviously, or so I thought, philosophy teacher education would go into reading, no? Talking to some highly accomplished and experienced scholars in didactics like Vanessa Albus or Laura Martena, I learned that reading is not only thought of as problematic but often even actively pushed to the fringes in teaching philosophy. But why? Well, part of the reason is that philosophy is already taught in primary school, a level at which you won’t rely on texts. For later stages, a common resource is provided, amongst other things, by so-called sets of post-texts (Nach-Texte) which present summaries of a philosopher’s opinion (as one among other opinions). This way, a text by Kant might be reduced to the opinion of a talkshow guest in class. Not quite as drastic, but perhaps similar in spirit is Jonathan Bennett’s famous initiative of providing translations of classic texts from the early modern period from English into English, “prepared with a view to making them easier to read while leaving intact the main arguments, doctrines, and lines of thought.” This way you get, for instance, a simplified version of Locke’s Essay. (More than 15 years ago, I was involved in a translation project in which someone mistook these translations for proper texts and handed in a translation of a classic text from the simplified English into German. Luckily, we caught this in time.) The upshot is that (again, with notable exceptions) even didactics makes do with the miraculous move from the textual surface to the supposed argument or position – without much thought about interference by different possible reading strategies. 
At the same time, didactics is, strangely enough, a fairly young discipline that was still pushed to the sidelines during my student days.